How to Use Jarvis, Microsoft’s One AI Bot to Rule Them All

With all the buzz around chatbots like ChatGPT, it’s easy to forget that text-based chat is just one of many AI capabilities. An ideal generative AI can work across different models as needed to interpret and generate images, sounds, and videos.

Enter Jarvis, Microsoft’s new project that promises one bot to rule them all. Jarvis uses ChatGPT as the system’s controller and calls on various other models as needed to respond to prompts. In a paper published on Cornell University’s arXiv, Microsoft researchers (Yongliang Shen, Kaitao Song, Xu Tan, Dongsheng Li, Weiming Lu, and Yueting Zhuang) explain how this framework works: a user sends a request to the bot, and the bot plans the task, selects the models it needs, has those models perform the task, and then generates a response.
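The four stages can be pictured as a simple pipeline in which each stage’s output feeds the next. The shell sketch below is a toy illustration only, not Jarvis code: `plan_tasks`, `select_model`, `run_task`, and `respond` are hypothetical stubs that print placeholder text, and the model names are made up. In the real system, ChatGPT handles planning, model selection, and the final response, while actual models do the execution.

```bash
# Toy model of the four stages described in the HuggingGPT paper.
# All functions are hypothetical stubs; no AI model is called.

plan_tasks() {
  # Task planning: split the request ($1) into subtasks, one per line.
  # Hard-coded here to mirror the pose example from the paper.
  printf '%s\n' "pose-detection" "pose-to-image"
}

select_model() {
  # Model selection: map a subtask to a model (placeholder names).
  case "$1" in
    pose-detection) echo "example/pose-model" ;;
    pose-to-image)  echo "example/pose-to-image-model" ;;
    *)              echo "unknown" ;;
  esac
}

run_task() {
  # Task execution: a real system would run inference with model $2 here.
  echo "result of $1 via $2"
}

respond() {
  # Response generation: summarize the newline-separated results in $1.
  local n
  n=$(printf '%s\n' "$1" | grep -c .)
  echo "Response built from $n result(s):"
  printf '%s\n' "$1" | sed 's/^/  - /'
}

handle_request() {
  local results
  # Each planned subtask gets a model, then is executed; results are collected.
  results=$(plan_tasks "$1" | while IFS= read -r task; do
    run_task "$task" "$(select_model "$task")"
  done)
  respond "$results"
}

handle_request "Draw a girl reading a book in the same pose as the boy"
```

The point of the sketch is the data flow rather than any single stage: the controller never does the image work itself, it only decides which models to call and stitches their outputs into one answer.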

The diagram below, from the research paper, shows how this process works in practice. A user asks the bot to create an image of a girl reading a book, posed the same way as the boy in a sample image. The bot plans the task, uses one model to interpret the boy’s pose in the original image, and then uses another model to draw the output.

Microsoft has a GitHub page where you can download Jarvis and try it on a Linux PC. The company recommends Ubuntu (specifically the older 16.04 LTS), but I was able to get the main feature, a terminal-based chatbot, working on Ubuntu 22.04 LTS and on the Windows Subsystem for Linux.
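If you aren’t sure which Ubuntu release you’re running, or whether your shell is inside WSL, a quick check like the one below can tell you. It’s a minimal sketch that assumes a standard /etc/os-release file and relies on WSL kernels reporting “microsoft” in /proc/version; the function names `os_version` and `is_wsl` are my own, not part of Jarvis.

```bash
# Report the distribution/release and whether this shell runs under WSL.
# File paths are optional arguments so the checks can be pointed at test files.

os_version() {
  # /etc/os-release is a shell-compatible file defining ID and VERSION_ID,
  # e.g. ID=ubuntu and VERSION_ID="22.04". Sourcing it in a subshell keeps
  # its variables out of the current shell.
  local file="${1:-/etc/os-release}"
  [ -r "$file" ] || return 1
  ( . "$file" && echo "${ID:-unknown} ${VERSION_ID:-unknown}" )
}

is_wsl() {
  # WSL kernels include "microsoft" (in some letter case) in /proc/version.
  grep -qi microsoft "${1:-/proc/version}" 2>/dev/null
}

echo "OS: $(os_version || echo unknown)"
if is_wsl; then echo "Running under WSL"; else echo "Not running under WSL"; fi
```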

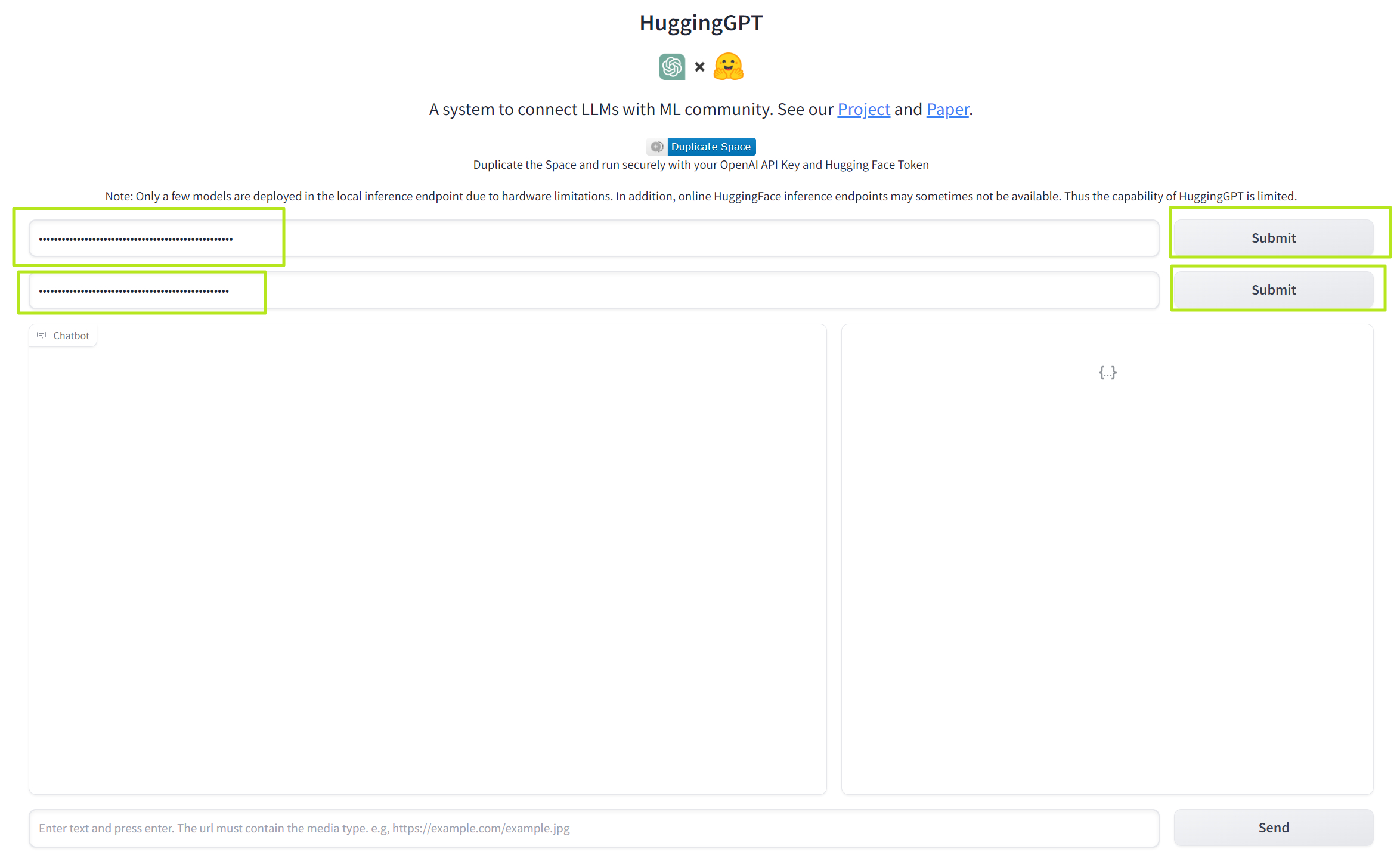

However, unless you really like messing around with configuration files, the best way to check out Jarvis is HuggingGPT, a web-based chatbot from the Microsoft researchers that is hosted on Hugging Face, an online AI community that hosts thousands of open-source models.

Follow the steps below to get a working chatbot that accepts images and other media as input and can output images. Note that, as with other bots I’ve tried, the results were very mixed.

How to set up and try Microsoft Jarvis / HuggingGPT

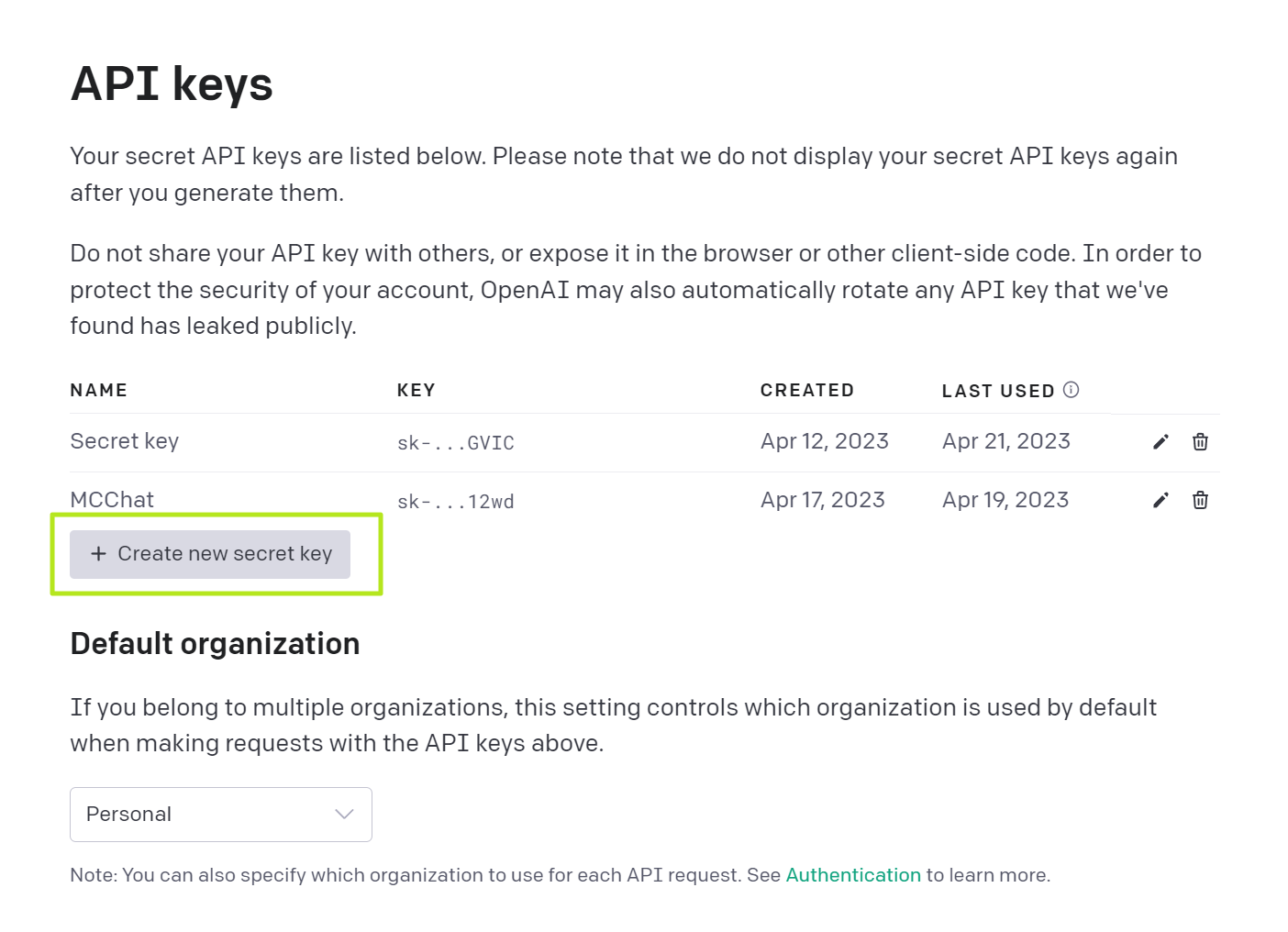

1. Get an OpenAI API key if you don’t already have one. Sign in at the OpenAI website and click “Create new secret key.” Signing up is free and you get free credits, but you have to pay once you use them up. Save the key somewhere easily accessible, such as a text file: once you close the dialog, you can’t view the key again.

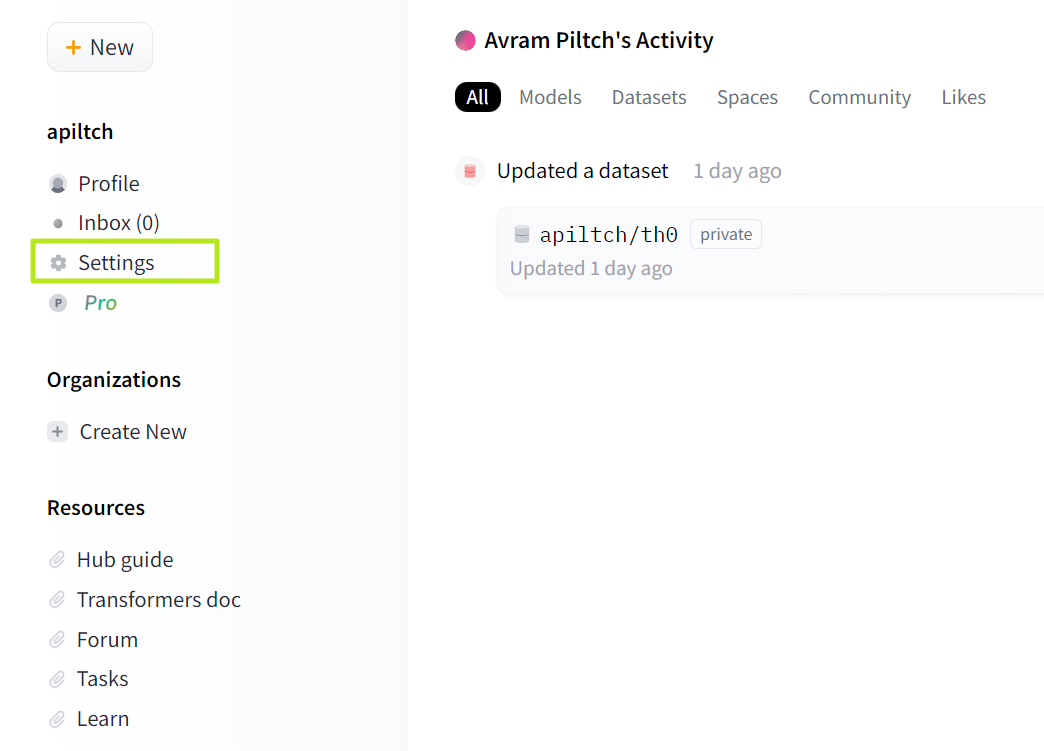

2. Sign up for a free Hugging Face account if you don’t have one yet, and log in. The site is at huggingface.co, not huggingface.com.

3. Go to Settings and click the Access Tokens link in the left rail.

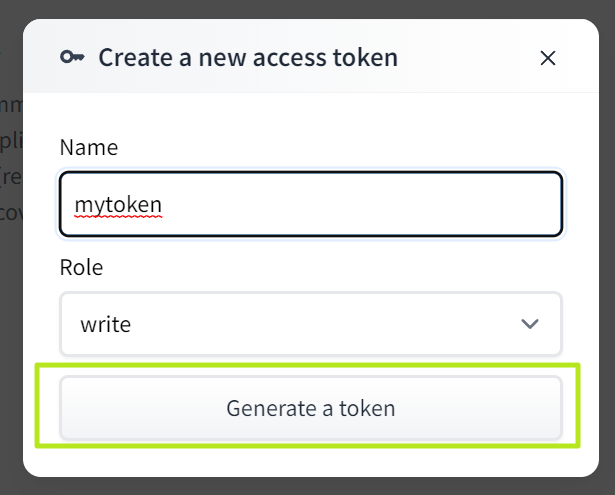

4. Click “New token.”

![Click New token](https://cdn.mos.cms.futurecdn.net/Tbf6mtG9KFqPmmFNgnwAxh.png)

5. Name the token (anything works), select “Write” as the role, and click “Generate.”

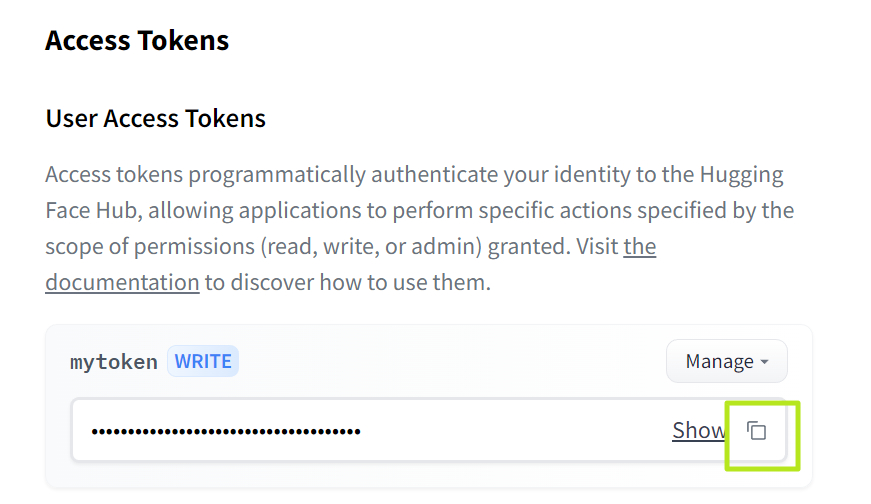

6. Copy the token and keep it somewhere easily accessible.

7. Go to the HuggingGPT page.

8. Paste your OpenAI key and Hugging Face token into the appropriate fields, then press the Send button next to each one.

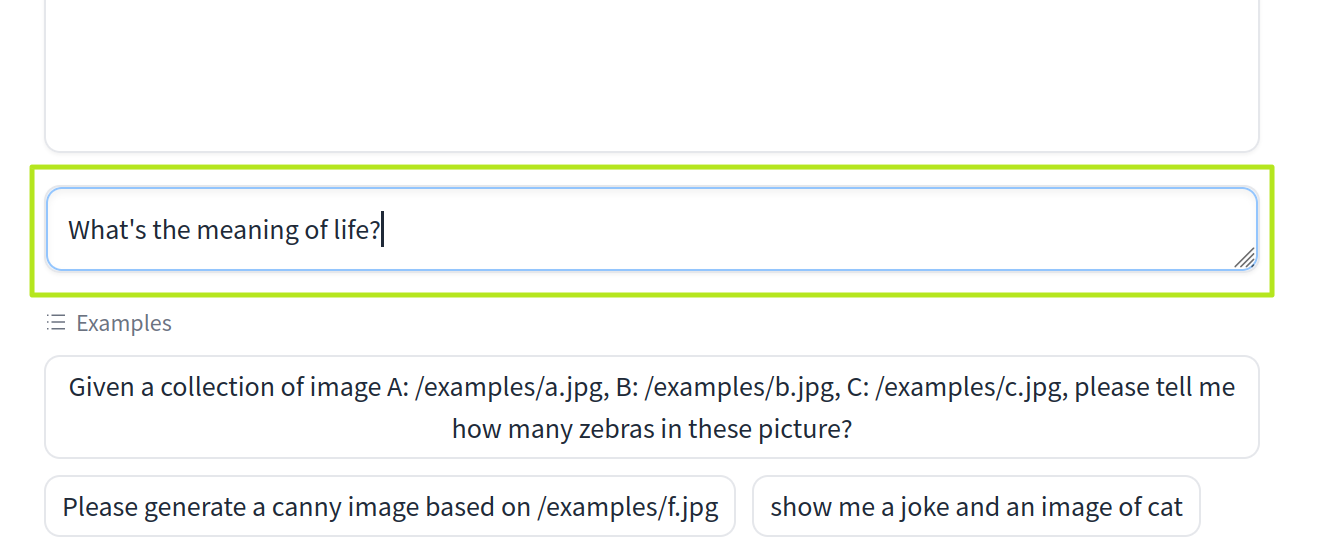

9. Enter your prompt in the query box and click “Send.”
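If you’d rather not keep the key and token in a text file that, with default permissions, other users on the machine could read, the sketch below stores each one in a file only your user can read and does a rough format check first. The helper names and the example directory are hypothetical, and the prefix checks (“sk-” for OpenAI secret keys, “hf_” for Hugging Face tokens) reflect the formats in use at the time of writing, so treat a mismatch as a hint rather than a hard error.

```bash
# Store secrets in owner-only files and sanity-check their format.
# Helper names and the example directory are hypothetical choices.

save_secret() {
  # usage: save_secret <file> <value>
  local file="$1" value="$2"
  mkdir -p "$(dirname "$file")" || return 1
  # umask 077 in a subshell means a newly created file is owner-only;
  # chmod covers the case where the file already existed.
  ( umask 077 && printf '%s\n' "$value" > "$file" ) && chmod 600 "$file"
}

# Rough format checks based on the prefixes in use at the time of writing.
looks_like_openai_key() { case "$1" in sk-*) return 0 ;; *) return 1 ;; esac; }
looks_like_hf_token()   { case "$1" in hf_*) return 0 ;; *) return 1 ;; esac; }

# Example (replace the placeholders with your real values):
#   dir="$HOME/.config/jarvis"
#   looks_like_openai_key "sk-..." && save_secret "$dir/openai_key" "sk-..."
#   looks_like_hf_token "hf_..." && save_secret "$dir/hf_token" "hf_..."
#   cat "$dir/openai_key"   # print the key when you need to paste it
```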

How to set up Jarvis/HuggingGPT on Linux

Using HuggingGPT on the Hugging Face website is much easier. However, if you want to install it on your local Ubuntu PC, here’s how to do it. A local install also lets you modify it to use more models.

1. Install git if you don’t already have it.

```bash
sudo apt install git
```

2. Clone the Jarvis repository from your home directory.

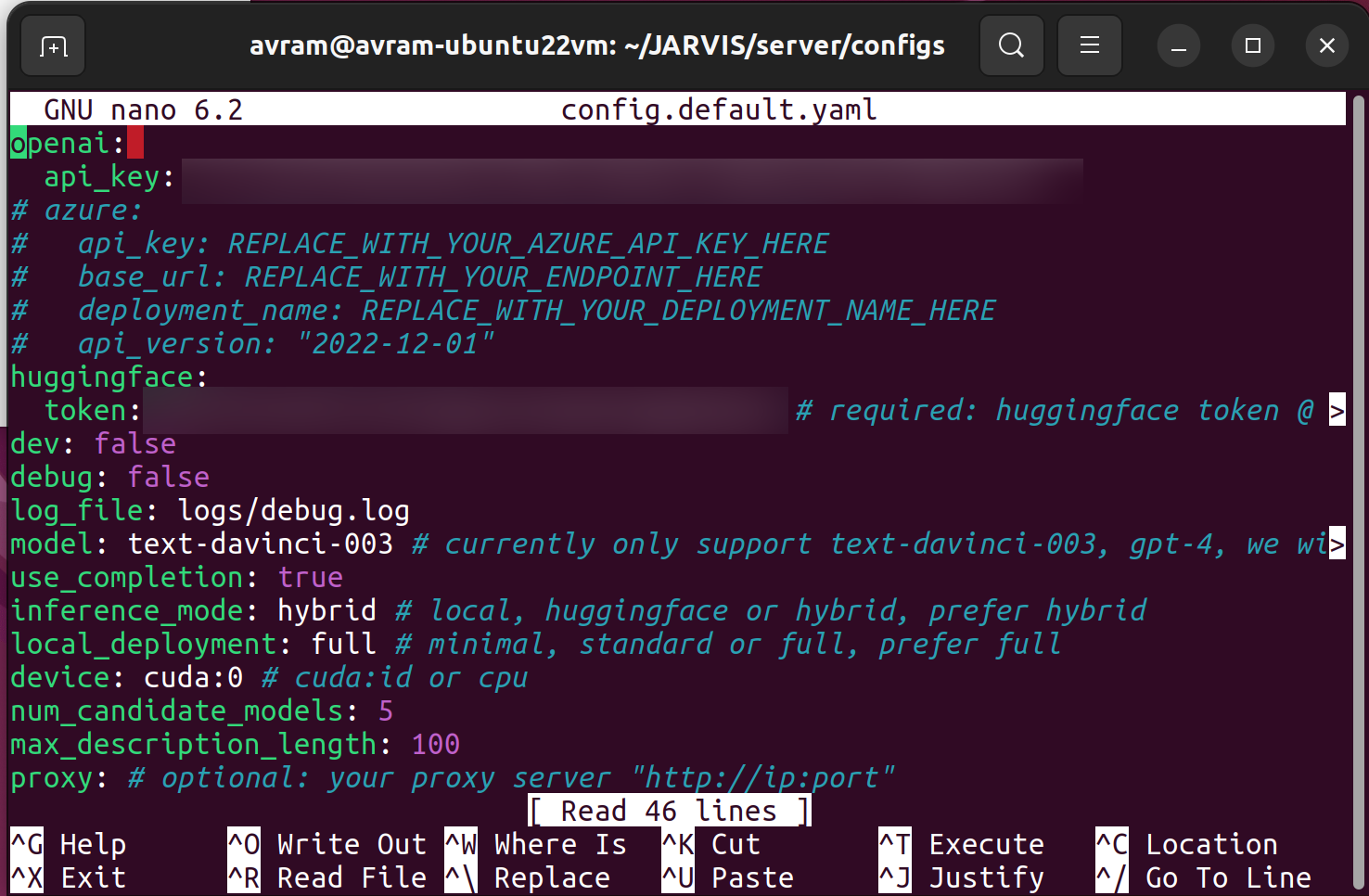

```bash
git clone https://github.com/microsoft/JARVIS
```

3. Go to the JARVIS/server/configs folder.

```bash
cd JARVIS/server/configs
```

4. Edit the configuration files to add your OpenAI API key and, where needed, your Hugging Face token. There are four files: config.azure.yaml, config.default.yaml, config.gradio.yaml, and config.lite.yaml. This how-to uses only the gradio file, but it makes sense to edit them all. You can edit them with nano (e.g. nano config.gradio.yaml). If you don’t have these API keys yet, you can get them for free from OpenAI and Hugging Face.

5. Install Miniconda if you haven’t already. Download the latest version from the Miniconda site. After the download finishes, go to your Downloads folder and run the installer by typing bash followed by the installer’s file name.

```bash
bash Miniconda3-latest-Linux-x86_64.sh
```

You will be prompted to accept the license agreement and confirm the install location. After installing Miniconda, close all terminal windows and reopen them so that the conda command is on your path. If it still isn’t, try rebooting.

6. Go back to the JARVIS/server directory.

7. Create and activate a jarvis conda environment.

```bash
conda create -n jarvis python=3.8
conda activate jarvis
```

8. Install the dependencies and models.

```bash
conda install pytorch torchvision torchaudio pytorch-cuda=11.7 -c pytorch -c nvidia
pip install -r requirements.txt
cd models
bash download.sh # required when `inference_mode` is `local` or `hybrid`
```

9. Go back to the JARVIS/server folder.

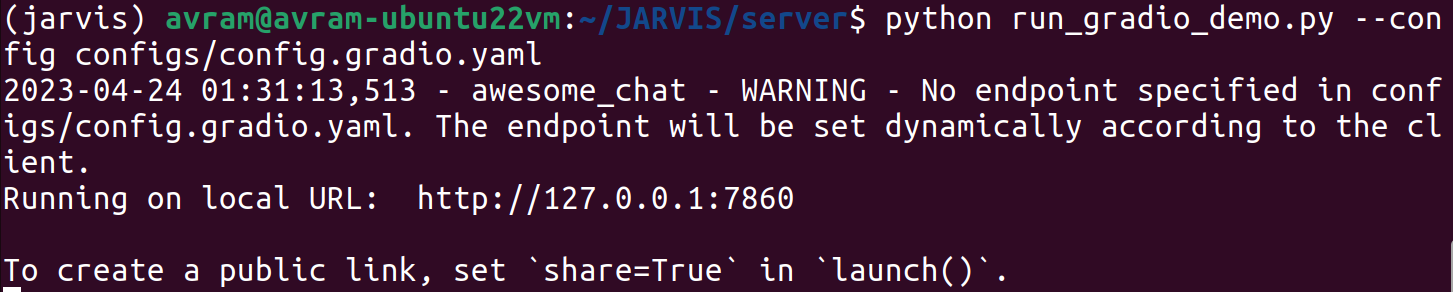

10. Run the following command to start the HuggingGPT web server locally using Gradio.

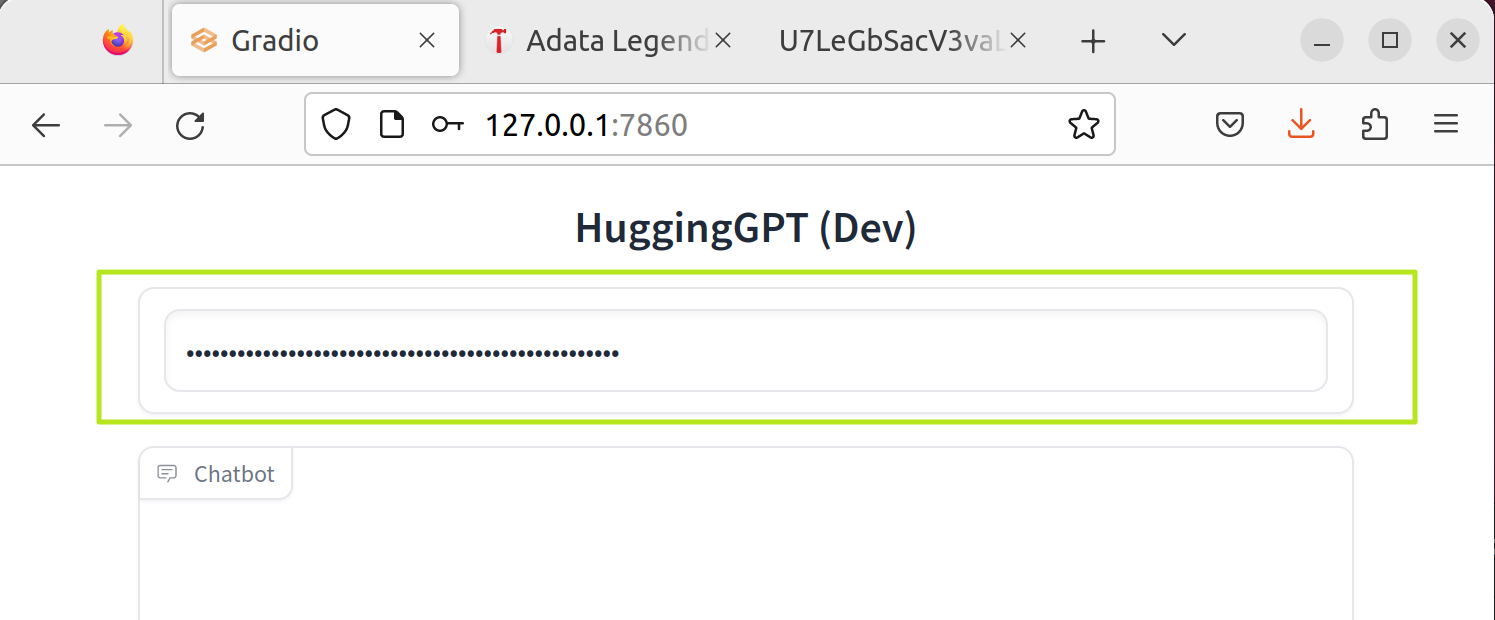

```bash
python run_gradio_demo.py --config configs/config.gradio.yaml
```

You should see a local URL that you can open in your web browser. In my case it was http://127.0.0.1:7860.

11. Open the URL (e.g. http://127.0.0.1:7860) in your browser. If you’re running Ubuntu in a VM, use the browser inside the VM.

12. Enter your OpenAI API key in the box at the top of the webpage.

13. Enter your prompt in the prompt box and press Enter.
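Several of the manual steps above (checking that conda is on your path, editing the config files, and deciding whether to run download.sh) can be partly scripted. The helpers below are a minimal sketch: `have_cmd`, `fill_keys`, and `needs_local_models` are hypothetical names, and the script assumes the config files contain the placeholders REPLACE_WITH_YOUR_OPENAI_API_KEY_HERE and REPLACE_WITH_YOUR_HUGGINGFACE_TOKEN_HERE plus a line such as `inference_mode: hybrid`. Open your copy of the files to confirm before relying on it.

```bash
# Helpers for the Linux setup steps. Assumes these placeholder strings
# appear in the config files; check your copy, since they may differ.
OPENAI_PLACEHOLDER="REPLACE_WITH_YOUR_OPENAI_API_KEY_HERE"
HF_PLACEHOLDER="REPLACE_WITH_YOUR_HUGGINGFACE_TOKEN_HERE"

have_cmd() {
  # Succeeds if the command is on PATH (e.g. have_cmd conda after step 5).
  command -v "$1" >/dev/null 2>&1
}

fill_keys() {
  # usage: fill_keys <openai_key> <hf_token> <config file>...
  local openai_key="$1" hf_token="$2" f
  shift 2
  for f in "$@"; do
    # "|" is used as the sed delimiter because keys and tokens should contain
    # only letters, digits, "-" and "_". -i edits in place (GNU sed).
    sed -i \
      -e "s|$OPENAI_PLACEHOLDER|$openai_key|" \
      -e "s|$HF_PLACEHOLDER|$hf_token|" \
      "$f" || return 1
  done
}

needs_local_models() {
  # Succeeds when inference_mode is local or hybrid, the modes for which
  # the setup commands say models/download.sh is required.
  local mode
  mode=$(sed -n 's/^inference_mode:[[:space:]]*\([a-z]*\).*/\1/p' "$1" | head -n 1)
  [ "$mode" = "local" ] || [ "$mode" = "hybrid" ]
}

# Example, run from JARVIS/server:
#   have_cmd conda || echo "conda not found: reopen the terminal or reboot"
#   fill_keys "sk-..." "hf_..." configs/*.yaml
#   needs_local_models configs/config.gradio.yaml && (cd models && bash download.sh)
```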

Using the Gradio server is just one way to interact with Jarvis on Linux. The Jarvis GitHub page lists more options, including running a model server and starting a command-line chat.

I couldn’t get most of these methods to work (the command-line chat worked fine, but its interface isn’t as good as the web chat). You might also be able to install more models and start generating video from text (I couldn’t).

Things to try with Jarvis / HuggingGPT

The bot can answer image, audio, and video queries in addition to standard text questions, and it can also generate images, audio, or video. However, if you use the web version, you may be limited by the free models available on Hugging Face. In the Linux version, you may be able to add more models.

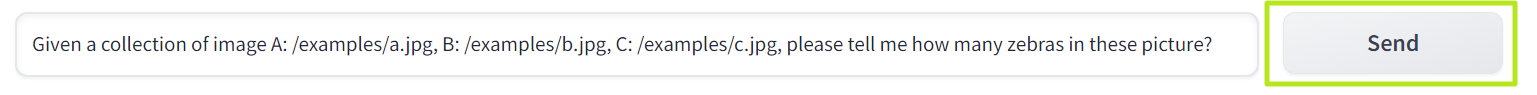

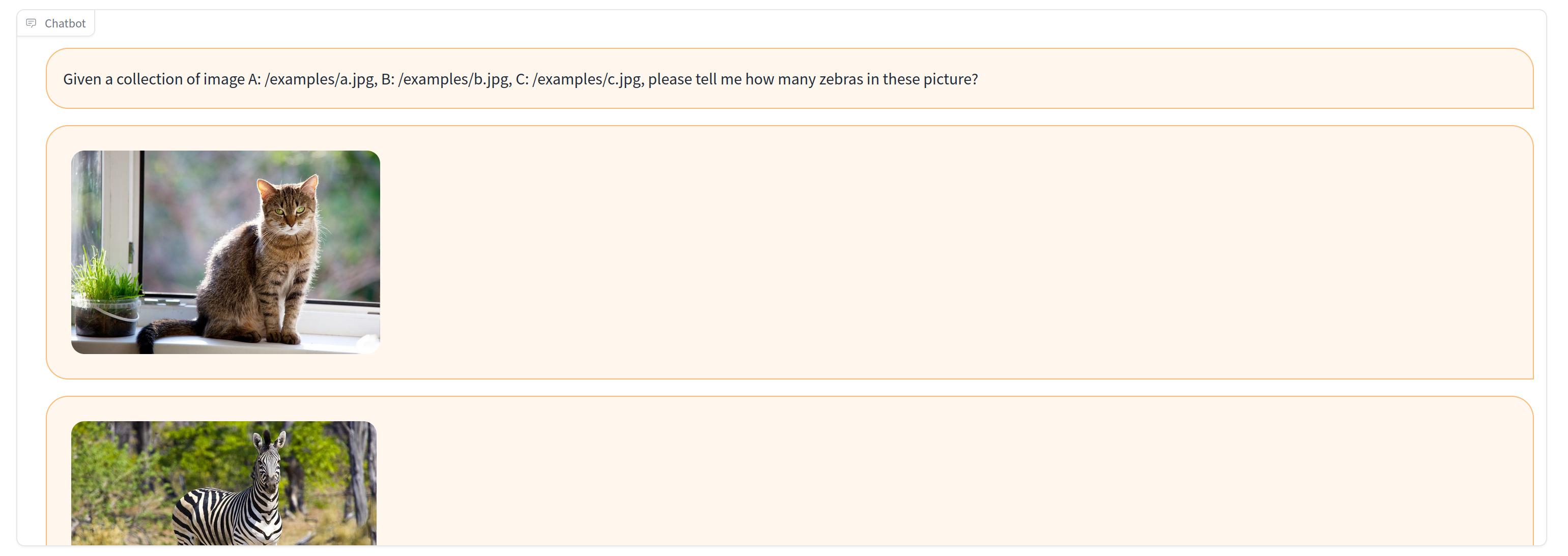

Below the prompt box are some sample queries you can click to try. They include giving the bot three sample images and asking it to count the zebras, asking it for a joke and a picture of a cat, and asking it to generate an image similar to another image.

Because the bot is web-based, the way to feed it images is to submit the URL of an online image. However, if you’re running the Linux version, you can store images locally in the JARVIS/server/public folder and reference them with a relative URL (e.g. /myimage.jpg for a file in the public folder itself, or /examples/myimage.jpg for one in its examples subfolder).
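To work out which relative URL to type for a local image, strip the path of the public folder from the file’s full path. The helper below is a minimal sketch that assumes you cloned the repository into your home directory, as in step 2 of the Linux setup; `public_url` is a hypothetical name, and you can pass a different public folder as the second argument.

```bash
# Map a file inside JARVIS/server/public to the relative URL used in prompts.
# The default public folder assumes the repo was cloned into $HOME.

public_url() {
  # usage: public_url <file> [public_dir]
  local file="$1" public_dir="${2:-$HOME/JARVIS/server/public}"
  public_dir="${public_dir%/}"   # tolerate a trailing slash
  case "$file" in
    "$public_dir"/*)
      # Strip the public folder prefix, keeping the leading "/".
      echo "${file#"$public_dir"}" ;;
    *)
      echo "error: $file is not inside $public_dir" >&2
      return 1 ;;
  esac
}
```

For example, after copying a photo to ~/JARVIS/server/public/examples/, running `public_url ~/JARVIS/server/public/examples/myimage.jpg` prints /examples/myimage.jpg, which you can then reference in your prompt.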

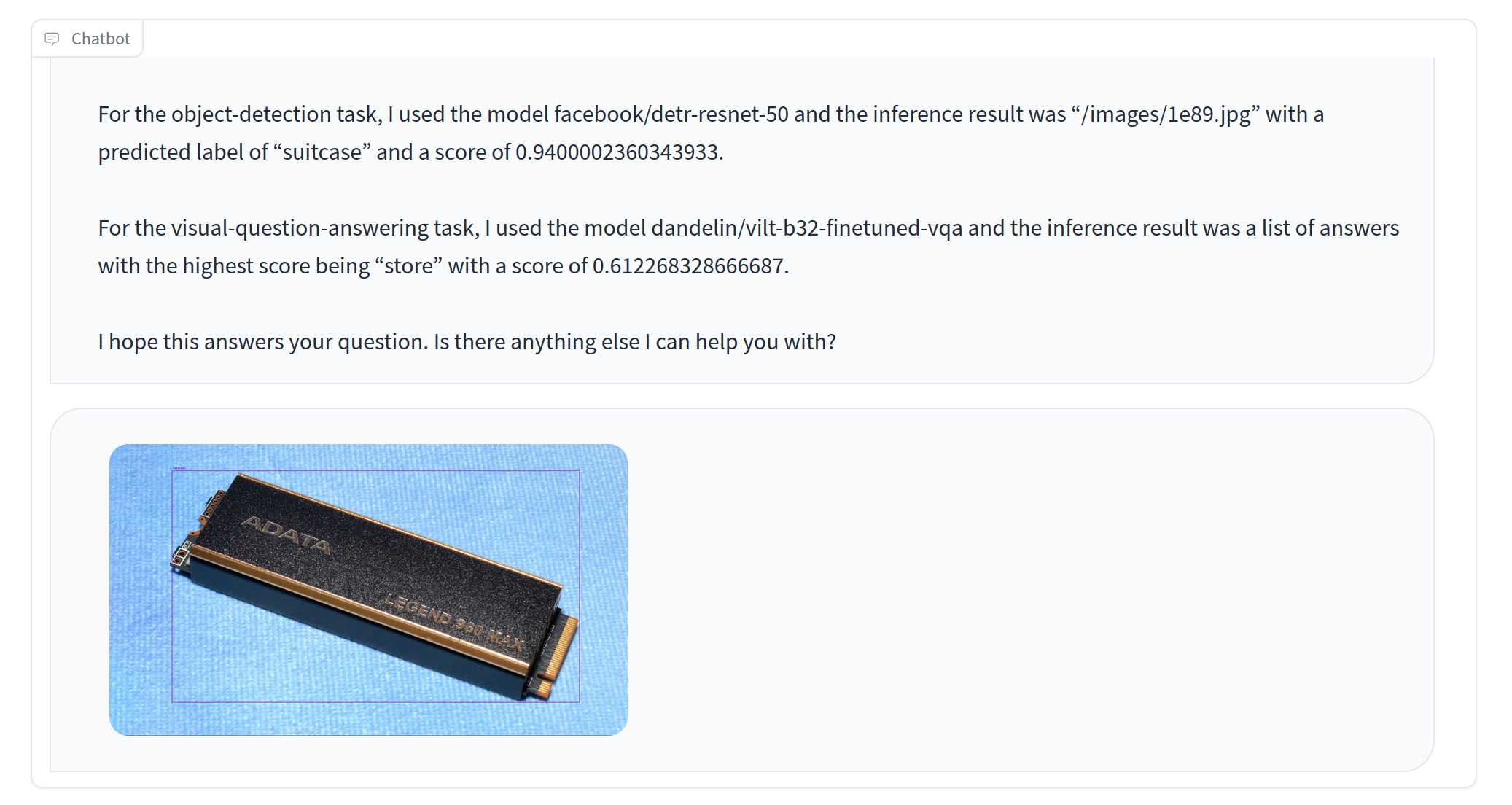

Most of my own queries didn’t work particularly well, and image recognition was especially bad. When I sent a picture of an M.2 SSD and asked where I could buy it, the bot identified the SSD as a suitcase and told me to find a “dealer.”

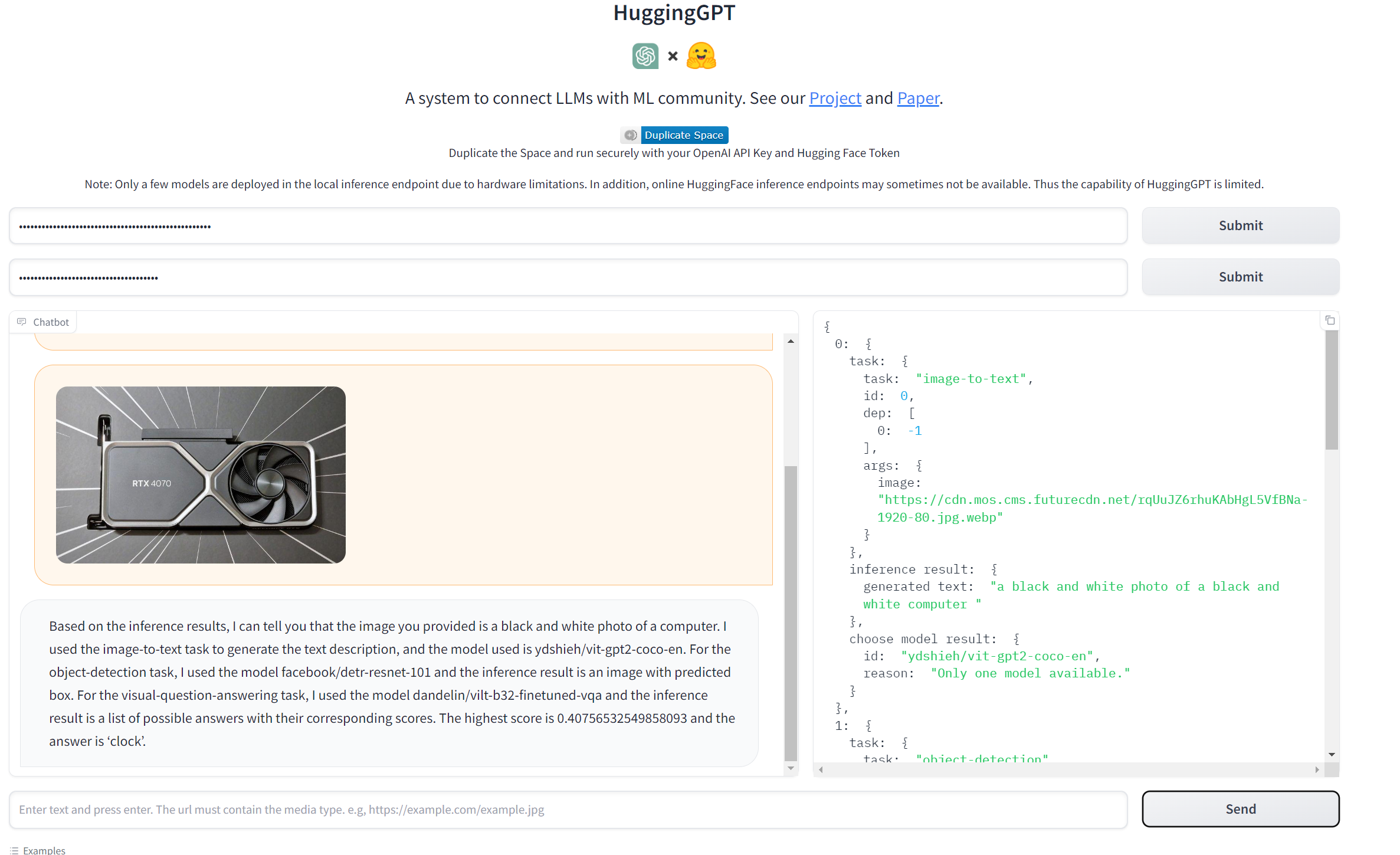

Similarly, when I sent a Minecraft screenshot and asked where I could buy it, the bot falsely claimed to see a kite flying in the sky. It mistook a photo of an RTX 4070 for a black-and-white photo of a computer, and when I asked where I could buy the card, it said, “You can buy any of these items from their online store or from various retailers nearby.” It didn’t link to any actual store.

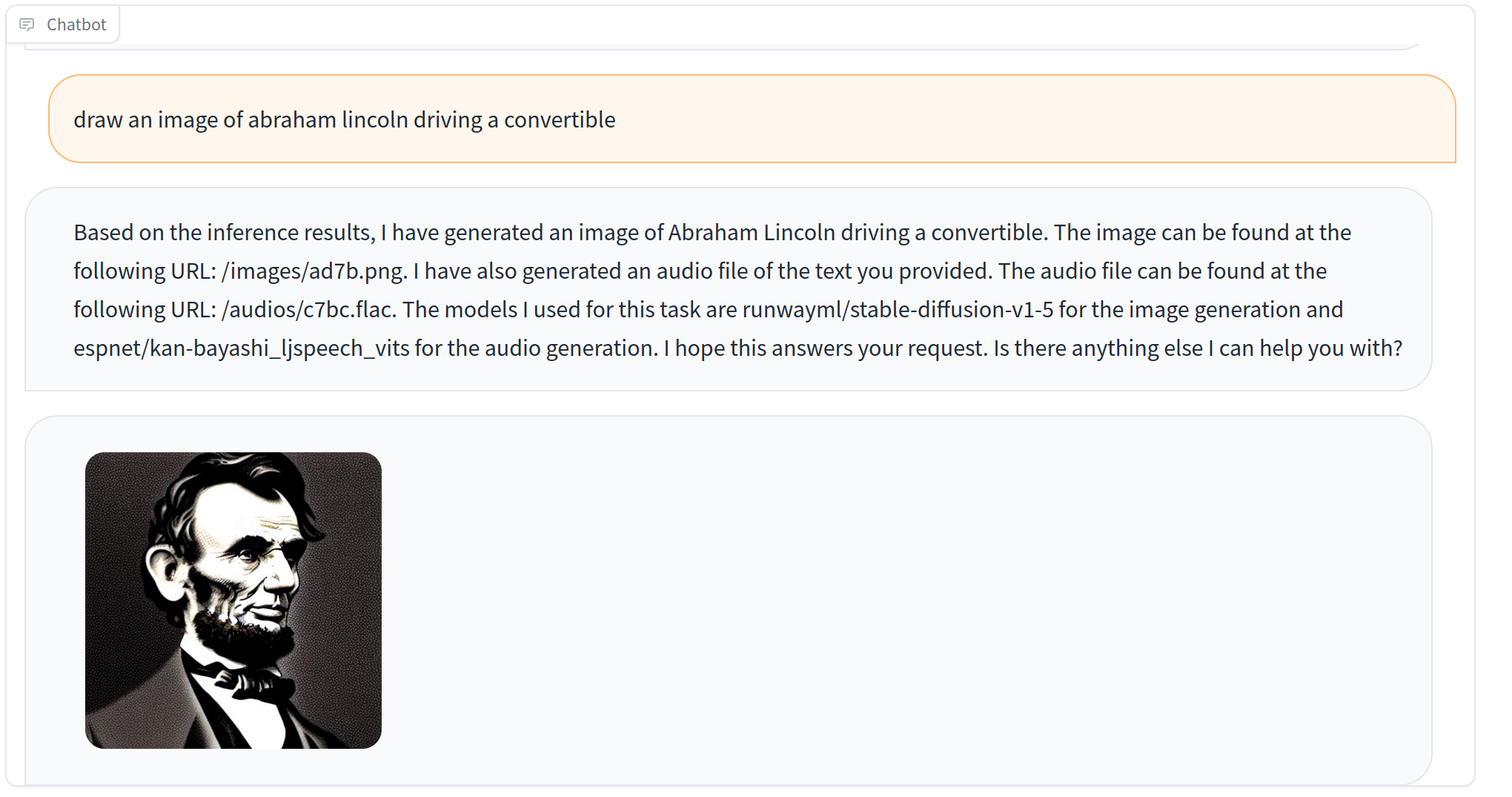

The bot wasn’t very good at generating images on demand, either. For example, when I asked it to draw Abraham Lincoln driving a convertible, it displayed a simple bust of the former president.

In short, apart from the specific examples Microsoft suggests, most queries didn’t work very well. But as with other AI frameworks such as Auto-GPT and BabyAGI, much depends on the models in use: the better the models, the better the output. If you want to experiment with autonomous agents, check out our tutorials on how to use Auto-GPT and how to use BabyAGI.