How To Create Your Own AI Chatbot Server With Raspberry Pi 4

I’ve shown you before how to run ChatGPT on a Raspberry Pi, but the problem is that the Pi only serves the client side and sends all the prompts to someone else’s powerful server in the cloud. It is possible, however, to create a similar AI chatbot experience that runs on the Pi itself and uses the same kind of LLaMA language model that powers AI in other services.

The core of this project is llama.cpp by Georgi Gerganov. Written in an evening, this C/C++ implementation is fast enough for general use and easy to install. It runs on Mac and Linux machines. In this how-to, we tweak Gerganov’s installation process so that the model runs on a Raspberry Pi 4. If you need a faster chatbot and have a computer with an RTX 3000 series GPU or higher, check out our article on how to run a bot like ChatGPT on your PC.

Managing expectations

Before I get into this project, I need to manage your expectations: LLaMA on Raspberry Pi 4 is slow. Chat prompts can take a few minutes to load, and responses to questions can take just as long. This is more of a fun project than a mission-critical use case.

What you need for this project

- Raspberry Pi 4 8GB

- PC with 16 GB RAM running Linux

- 16GB or larger USB drive formatted as NTFS
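
Before starting, it can be worth confirming that the Linux PC really has the RAM listed above. The following is a minimal sketch, not part of the original procedure: the function name `has_enough_ram` is hypothetical, and the 15 GiB threshold is an assumption that allows for the fact that a "16 GB" machine usually reports slightly less than that.

```shell
# Return success if the machine reports at least the required RAM.
# Arguments: required KiB, optional path to a meminfo-style file
# (defaults to /proc/meminfo on Linux).
has_enough_ram() {
    required_kib=$1
    meminfo=${2:-/proc/meminfo}
    total_kib=$(awk '/^MemTotal:/ {print $2}' "$meminfo")
    [ -n "$total_kib" ] && [ "$total_kib" -ge "$required_kib" ]
}

# Assumption: 15 GiB is used as the cut-off for a nominal 16 GB machine.
if has_enough_ram $((15 * 1024 * 1024)); then
    echo "RAM looks sufficient for the conversion step"
else
    echo "Less than about 16 GB of RAM detected; the conversion step may fail"
fi
```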

Setting up the LLaMA 7B model with a Linux PC

The first section of the process is to set up llama.cpp on your Linux PC, download the LLaMA 7B model, convert it, and copy it to a USB drive. The extra power of a Linux PC is needed to convert the model, as the Raspberry Pi’s 8 GB of RAM is not enough.
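
A rough back-of-the-envelope calculation shows why the conversion does not fit on the Pi. This sketch assumes about 7 billion parameters (an approximation taken from the "7B" name, not an exact count) and uses a hypothetical helper, `model_size_mib`, that multiplies the parameter count by the bits stored per parameter.

```shell
# Estimate the size of a model's weights in MiB.
# Arguments: number of parameters, bits stored per parameter.
model_size_mib() {
    params=$1
    bits=$2
    echo $(( params * bits / 8 / 1048576 ))
}

# Assumption: 7000000000 approximates the parameter count of the 7B model.
echo "FP16:  $(model_size_mib 7000000000 16) MiB"
echo "4-bit: $(model_size_mib 7000000000 4) MiB"
```

At 16 bits per parameter the weights alone come to about 13 GiB, which is more than the Pi's 8 GB of RAM. At 4 bits they drop to a little over 3 GiB. Treat that second figure as a lower bound, since the real quantized file is likely to be somewhat larger than the bare weights.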

1. Open a terminal on your Linux PC and make sure git is installed.

sudo apt update && sudo apt install git

2. Clone the repository using git.

git clone https://github.com/ggerganov/llama.cpp

3. Install a set of Python modules. These modules work with the model to create a chatbot.

python3 -m pip install torch numpy sentencepiece

4. Make sure you have g++ and build-essential installed. These are required to build C applications.

sudo apt install g++ build-essential

5. In the terminal, change directory to llama.cpp.

cd llama.cpp

6. Build the project files. Press Enter to run.

make

7. Download the LLaMA 7B torrent using this link. I used qBittorrent to download the model.

magnet:?xt=urn:btih:ZXXDAUWYLRUXXBHUYEMS6Q5CE5WA3LVA&dn=LLaMA

8. Adjust the download so that only the 7B and tokenizer files are downloaded. The other folders contain larger models that are hundreds of gigabytes in size.

9. Copy 7B and tokenizer files to /llama.cpp/models/.

10. Open a terminal and navigate to the llama.cpp folder. It should be in your home directory.

cd llama.cpp

11. Convert the 7B model to ggml FP16 format. Depending on your PC, this process may take some time. This step alone requires 16GB of RAM, because it loads the entire 13GB models/7B/consolidated.00.pth file into RAM as a PyTorch model. When I tried this step on an 8GB Raspberry Pi 4, I got an illegal instruction error.

python3 convert-pth-to-ggml.py models/7B/ 1

12. Quantize the model to 4 bits. This will reduce the size of the model.

python3 quantize.py 7B

13. Copy the contents of /models/ to a USB drive.
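
The convert and quantize steps above can be wrapped in a small script that first checks that the downloaded files are where the converter expects them. This is only a sketch under stated assumptions: the helper names (`run`, `model_files_present`, `convert_and_quantize`) and the `DRY_RUN` switch are hypothetical, and `tokenizer.model` is an assumed file name for the tokenizer, so adjust it to match your download. By default the script only prints the commands it would run.

```shell
# Print each command instead of running it unless DRY_RUN=0 is set.
DRY_RUN=${DRY_RUN:-1}
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Check that the manually downloaded files are in place (steps 7 to 9).
# Assumption: the tokenizer file is named tokenizer.model.
model_files_present() {
    models_dir=$1
    [ -f "$models_dir/7B/consolidated.00.pth" ] &&
    [ -f "$models_dir/tokenizer.model" ]
}

# Steps 11 and 12: convert to ggml FP16, then quantize to 4 bits.
convert_and_quantize() {
    models_dir=$1
    if ! model_files_present "$models_dir"; then
        echo "Model files not found under $models_dir" >&2
        return 1
    fi
    run python3 convert-pth-to-ggml.py "$models_dir/7B/" 1 &&
    run python3 quantize.py 7B
}
```

From inside the llama.cpp folder, `convert_and_quantize models` previews the two commands. Setting `DRY_RUN=0` before calling it runs them for real.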

Run LLaMA on Raspberry Pi 4

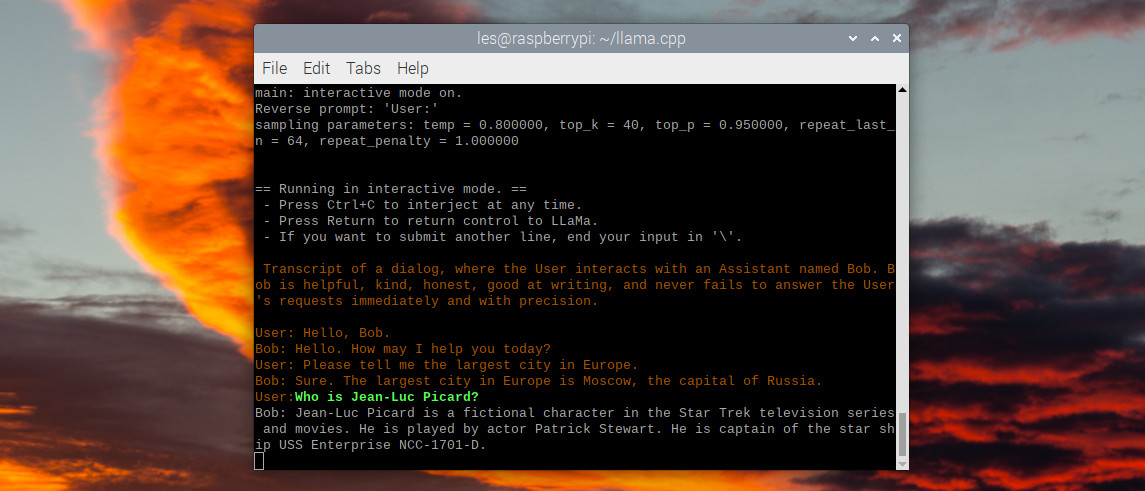

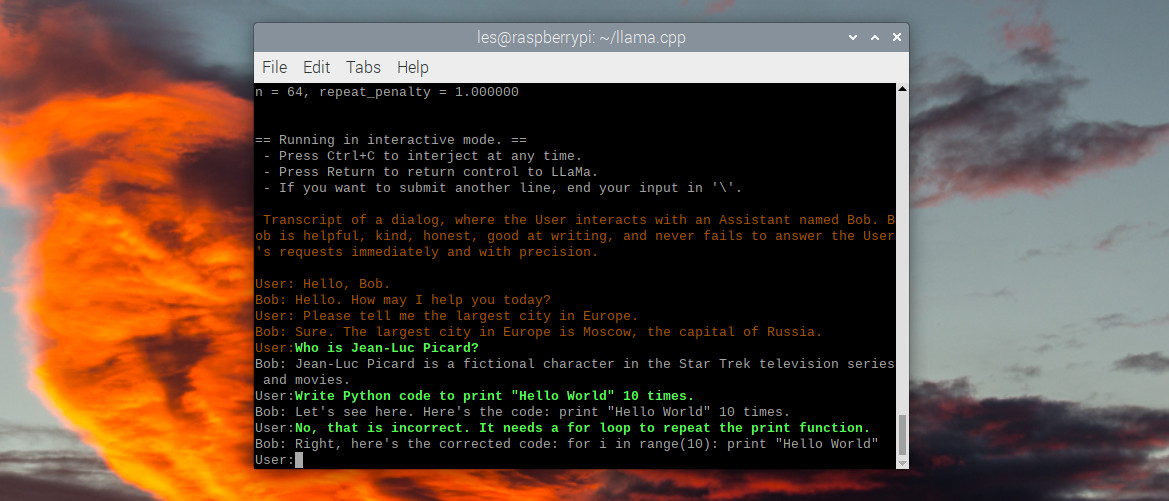

In this last section, we will repeat the llama.cpp setup on the Raspberry Pi 4 and copy the model over using a USB drive. Then we load an interactive chat session and ask “Bob” a series of questions. Just don’t ask it to write Python code. Step 9 of this process can be done on a Raspberry Pi 4 or Linux PC.

1. Boot the Raspberry Pi 4 to the desktop.

2. Open a terminal and make sure git is installed.

sudo apt update && sudo apt install git

3. Clone the repository using git.

git clone https://github.com/ggerganov/llama.cpp

4. Install a set of Python modules. These modules work with the model to create a chatbot.

python3 -m pip install torch numpy sentencepiece

5. Make sure you have g++ and build-essential installed. These are required to build C applications.

sudo apt install g++ build-essential

6. In the terminal, change directory to llama.cpp.

cd llama.cpp

7. Build the project files. Press Enter to run.

make

8. Insert the USB drive and copy the files to /models/. This will overwrite all files in the models directory.

9. Start an interactive chat session with “Bob”. A little patience is required here. The 7B model is lighter than the others, but it is still quite a heavy model for the Raspberry Pi to digest. It may take several minutes to load the model.

./chat.sh

10. Ask Bob a question and press Enter. I asked it to tell me about Jean-Luc Picard from Star Trek: The Next Generation. Press CTRL+C to exit.
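
Because the model files are several gigabytes in size, it can be reassuring to check that the copy from the USB drive in step 8 completed cleanly before waiting for the model to load. The sketch below is an illustration rather than part of the original procedure. The function names `copy_models` and `verify_copy` are hypothetical, and it compares every file on the drive byte for byte with its copy, which will itself take a while on the Pi.

```shell
# Confirm that every file under the source directory exists in the
# destination with identical contents. Extra files in the destination
# are ignored.
verify_copy() {
    src=$1
    dst=$2
    (cd "$src" && find . -type f) | while IFS= read -r f; do
        cmp -s "$src/$f" "$dst/$f" || { echo "Mismatch: $f" >&2; exit 1; }
    done
}

# Copy the contents of the source directory into the destination,
# then verify the result.
copy_models() {
    src=$1
    dst=$2
    mkdir -p "$dst" &&
    cp -R "$src"/. "$dst"/ &&
    verify_copy "$src" "$dst"
}
```

For example, if the drive were mounted at a hypothetical path such as /media/pi/USB, then `copy_models /media/pi/USB/models ~/llama.cpp/models` would copy the files and report any that do not match.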