AMD Puts Hopes on Packaging, Memory on Logic, Optical Comms for Decade Ahead

Earlier this week at ISSCC 2023, AMD discussed the future of computing over the next decade. AMD CEO Dr. Lisa Su, the lead presenter, showed how AMD has delivered impressive gains in supercomputer, server, and GPU performance over the last few decades. Perhaps more interesting, though, is the well-considered plan for how AMD intends to keep pushing forward, using advanced technologies to compensate for the dwindling benefits of semiconductor process shrinks.

In its performance slides, AMD claims to have doubled the performance of mainstream servers every 2.4 years since 2009. However, it does not share any forecasts for this market. AMD is more forthcoming about future GPU performance trends: it claims that GPU performance has doubled every 2.2 years since 2006, and its chart projects this trend continuing until at least 2025.

Supercomputer performance shows the fastest progress over time: AMD's final chart shows supercomputer performance doubling every 1.2 years since the late 1990s, a trend AMD processors have contributed to. Additionally, AMD predicts that zettascale supercomputer performance will be reached in about 10 years. AMD also took time to highlight efficiency improvements and the hard fight to keep Moore's Law going as gains in logic density gradually slow.
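To put these doubling periods in perspective, the short sketch below converts each one into an implied yearly growth rate and a growth multiple per decade. It assumes a simple exponential model, performance ∝ 2^(t / doubling period); the function names are hypothetical, and the article does not describe how AMD fitted its trend lines.

```python
def growth_factor(doubling_period_years: float, span_years: float) -> float:
    """Total performance multiplier after span_years, assuming performance
    doubles every doubling_period_years (simple exponential model)."""
    return 2 ** (span_years / doubling_period_years)


def annual_rate(doubling_period_years: float) -> float:
    """Compound year-over-year growth implied by the doubling period."""
    return 2 ** (1 / doubling_period_years) - 1


# Doubling periods as quoted from AMD's ISSCC 2023 slides.
trends = {"servers": 2.4, "GPUs": 2.2, "supercomputers": 1.2}

for name, period in trends.items():
    print(f"{name}: ~{annual_rate(period):.0%} per year, "
          f"~{growth_factor(period, 10):.0f}x per decade")
```

Under this model, doubling every 2.4 years works out to roughly a third more performance each year and close to 18x over a decade, while a 1.2-year doubling period compounds to several hundred times over the same span.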

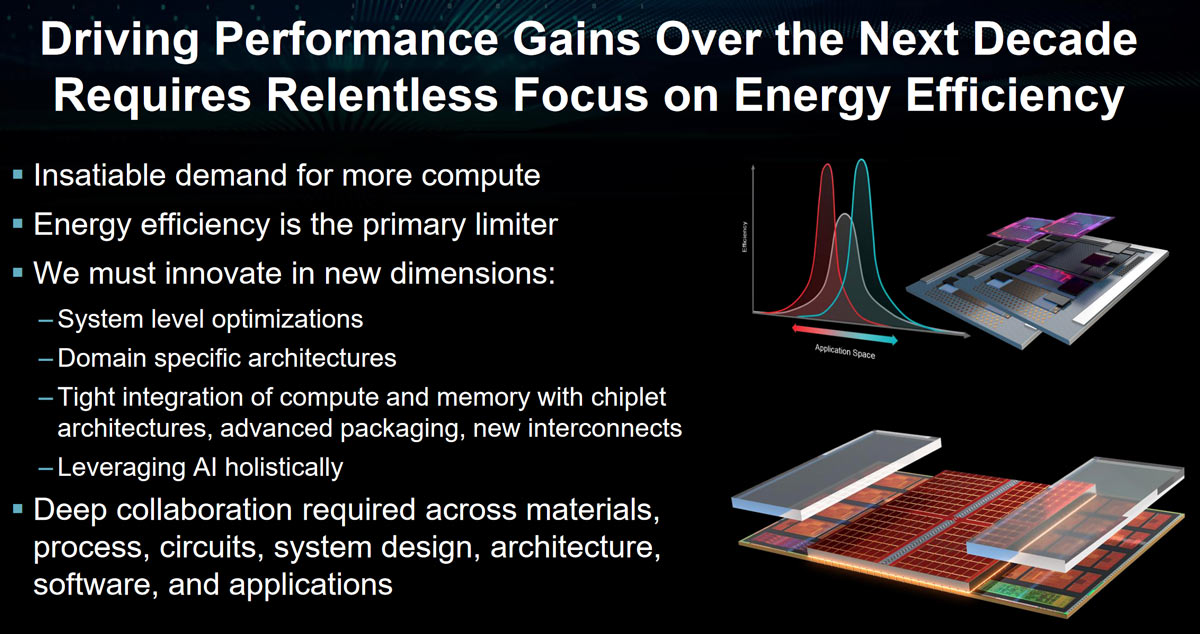

AMD laid out several of the major strategies it expects to drive efficiency and performance improvements over the next decade, with advanced packaging chief among them. We've already seen some of the results of AMD going this route with chiplets and 3D V-Cache, and that is likely to continue.

Advanced packaging avenues under consideration include 3D stacking of CPU and GPU silicon "for next-level efficiency". Additionally, AMD believes that "tighter integration of compute and memory" will deliver higher bandwidth at lower power. AMD is also pursuing in-memory processing: a slide shared at ISSCC showed a processor with an HBM module stacked on top. According to AMD, running key algorithmic kernels in memory significantly reduces the burden on the rest of the system and cuts latency.

Another major target for efficiency savings, and thus potential performance gains, is chip I/O and communications. Specifically, optical communication tightly integrated with the compute dies promises valuable efficiency gains.

AMD also took the opportunity to tout the AI performance improvements its processor portfolio has delivered over the past decade. The presentation covered several use cases for AI computing and highlighted the potential performance gains that AI can bring to simulations.

Of course, AMD isn't the only company looking to the benefits of advanced packaging, chiplets, die stacking, in-memory computing, optical communications, and AI acceleration. Still, it appears to have solid plans for facing fierce competition from its rivals, and PC enthusiasts stand to benefit if those plans yield even faster and more efficient chips.