AWS Uses Intel’s Habana Gaudi for Large Language Models

Intel’s Habana Gaudi offers fairly competitive performance and comes with the Habana SynapseAI software package, but it still falls short of Nvidia’s CUDA-enabled compute GPUs. This, combined with limited availability, is why Gaudi has not been as popular for large language models (LLMs) such as ChatGPT.

Now that the AI rush has begun, Intel’s Habana is seeing more widespread adoption. Amazon Web Services decided to try Intel’s 1st Gen Gaudi with PyTorch and DeepSpeed for training LLMs. The results were encouraging enough for the company to offer DL1 EC2 instances commercially.

Training large language models (LLMs) with billions of parameters presents challenges. Special training techniques are required to account for the memory limitations of a single accelerator and the scalability of multiple accelerators working together. AWS researchers used DeepSpeed, an open-source deep learning optimization library for PyTorch designed to alleviate some of the LLM training challenges and accelerate model development and training, and ran the work on Amazon EC2 DL1 instances based on Intel Habana Gaudi. The results they achieved look very promising.

The researchers built a managed computing cluster using AWS Batch. The cluster consists of 16 dl1.24xlarge instances, each with 8 Habana Gaudi accelerators. Each accelerator has 32 GB of memory, and the cards are connected by a full-mesh RoCE network with a total card-to-card bandwidth of 700 Gbps each. The cluster was also equipped with 4 AWS Elastic Fabric Adapters (EFA), for a total interconnect of 400 Gbps between nodes.

On the software side, the researchers used DeepSpeed ZeRO stage 1 optimization to pre-train a BERT 1.5B model with different parameters. The goal was to optimize training performance and cost effectiveness. To ensure model convergence, the hyperparameters were tuned and the effective batch size per accelerator was set to 384, using a micro-batch size of 16 per step and 24 steps of gradient accumulation (16 × 24 = 384).
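The batch settings in this paragraph can be checked with a little arithmetic. The sketch below is an illustration, not the researchers' actual configuration: it treats 16 as the micro-batch size per accelerator, assumes pure data parallelism across all 16 × 8 accelerators, and uses a hypothetical helper named `build_deepspeed_config` to lay the values out in the shape of a DeepSpeed configuration dictionary.

```python
# Figures quoted in the article.
NUM_INSTANCES = 16             # dl1.24xlarge instances in the cluster
ACCELERATORS_PER_INSTANCE = 8  # Gaudi accelerators per instance
MICRO_BATCH_SIZE = 16          # samples per accelerator per step
GRAD_ACCUM_STEPS = 24          # gradient accumulation steps


def build_deepspeed_config(micro_batch, grad_accum, world_size):
    """Hypothetical helper: return a DeepSpeed-style config dict.

    Assumption: pure data parallelism, so the global batch size is
    micro_batch * grad_accum * world_size. DeepSpeed requires these
    three values to be consistent with one another.
    """
    return {
        "train_micro_batch_size_per_gpu": micro_batch,
        "gradient_accumulation_steps": grad_accum,
        "train_batch_size": micro_batch * grad_accum * world_size,
        "zero_optimization": {"stage": 1},  # ZeRO stage 1, as in the article
        "bf16": {"enabled": True},          # BF16 training, as in the article
    }


world_size = NUM_INSTANCES * ACCELERATORS_PER_INSTANCE
per_accelerator_batch = MICRO_BATCH_SIZE * GRAD_ACCUM_STEPS
config = build_deepspeed_config(MICRO_BATCH_SIZE, GRAD_ACCUM_STEPS, world_size)

print("accelerators:", world_size)
print("effective batch per accelerator:", per_accelerator_batch)
print("global batch (derived, assumes data parallelism):", config["train_batch_size"])
```

The global batch size printed here is derived from the quoted figures under the stated assumption; the article itself only reports the per-accelerator value.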

Intel Habana Gaudi’s scaling efficiency is relatively high: when training a BERT model with 340 million parameters on 8 instances (64 accelerators), it never dropped below 90%.

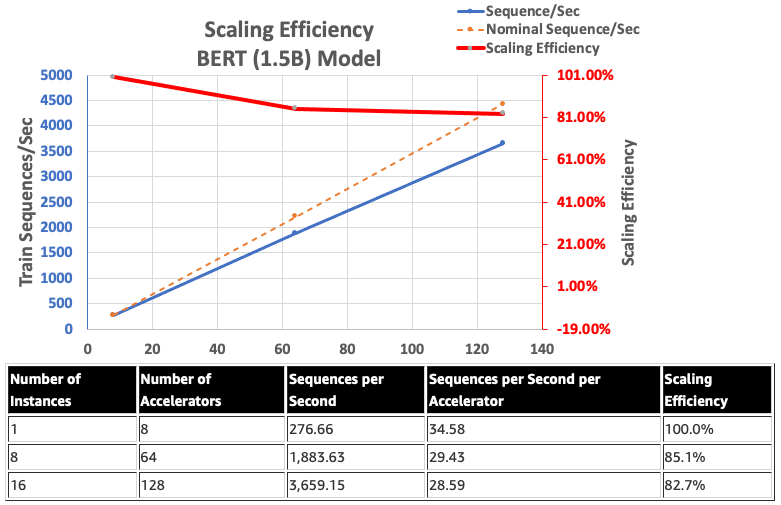

Meanwhile, AWS researchers used Gaudi’s native BF16 support to reduce memory requirements and improve training performance compared to FP32, which made it possible to train the BERT model with 1.5 billion parameters. They achieved a scaling efficiency of 82.7% across 128 accelerators using DeepSpeed ZeRO stage 1 optimization for BERT models with 340 million to 1.5 billion parameters.
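For readers unfamiliar with the metric, scaling efficiency is commonly defined as measured throughput divided by the throughput that perfect linear scaling from a baseline would give. The sketch below uses that common definition. The article does not say which baseline the researchers used, so the single-accelerator baseline and the throughput numbers in the example are assumptions made purely for illustration.

```python
def scaling_efficiency(throughput_n, throughput_base, n, base_n=1):
    """Measured throughput on n accelerators divided by the ideal
    (linearly scaled) throughput extrapolated from base_n accelerators."""
    ideal = throughput_base * (n / base_n)
    return throughput_n / ideal


def effective_speedup(efficiency, n, base_n=1):
    """Speedup over the baseline implied by a given scaling efficiency."""
    return efficiency * (n / base_n)


# Hypothetical throughputs (samples/s), NOT measurements from the article:
# 1 accelerator at 1000 samples/s, 8 accelerators at 7200 samples/s.
example_eff = scaling_efficiency(7200.0, 1000.0, n=8, base_n=1)

# Efficiency reported in the article: 82.7% across 128 accelerators.
# Assumption: the baseline is a single accelerator.
speedup_128 = effective_speedup(0.827, 128)

print(f"example efficiency: {example_eff:.1%}")
print(f"implied speedup at 128 accelerators: {speedup_128:.1f}x")
```

Under that single-accelerator assumption, 82.7% efficiency on 128 accelerators corresponds to roughly a 106x speedup rather than the ideal 128x.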

Overall, AWS researchers found that a BERT model with 1.5 billion parameters can be pre-trained to convergence in less than 16 hours on a network of 128 Gaudi accelerators, with 85% scaling efficiency. The architecture can be evaluated using the AWS Workshop.