Erasing Authors, Google and Bing’s AI Bots Endanger Open Web

With the large-scale growth of ChatGPT making headlines on a daily basis, Google and Microsoft have responded by showing off AI chatbots built into their search engines. It is self-evident that AI is the future. But the future of what?

At Tom’s Hardware, we strive to push the boundaries of technology to see what’s possible. So on a technical level, I am impressed with how human the chatbot responses are. AI is a powerful tool that can be used to enhance human learning, productivity, and enjoyment. But both Google’s Bard bot and Microsoft’s “New Bing” chatbot are based on a false and dangerous premise: that readers don’t care where their information comes from or who is behind it.

Both companies’ AI engines, built on information from human authors, are being positioned as alternatives to the articles they learned from. The end result could be a more closed web with less free information and fewer experts who can give you good advice.

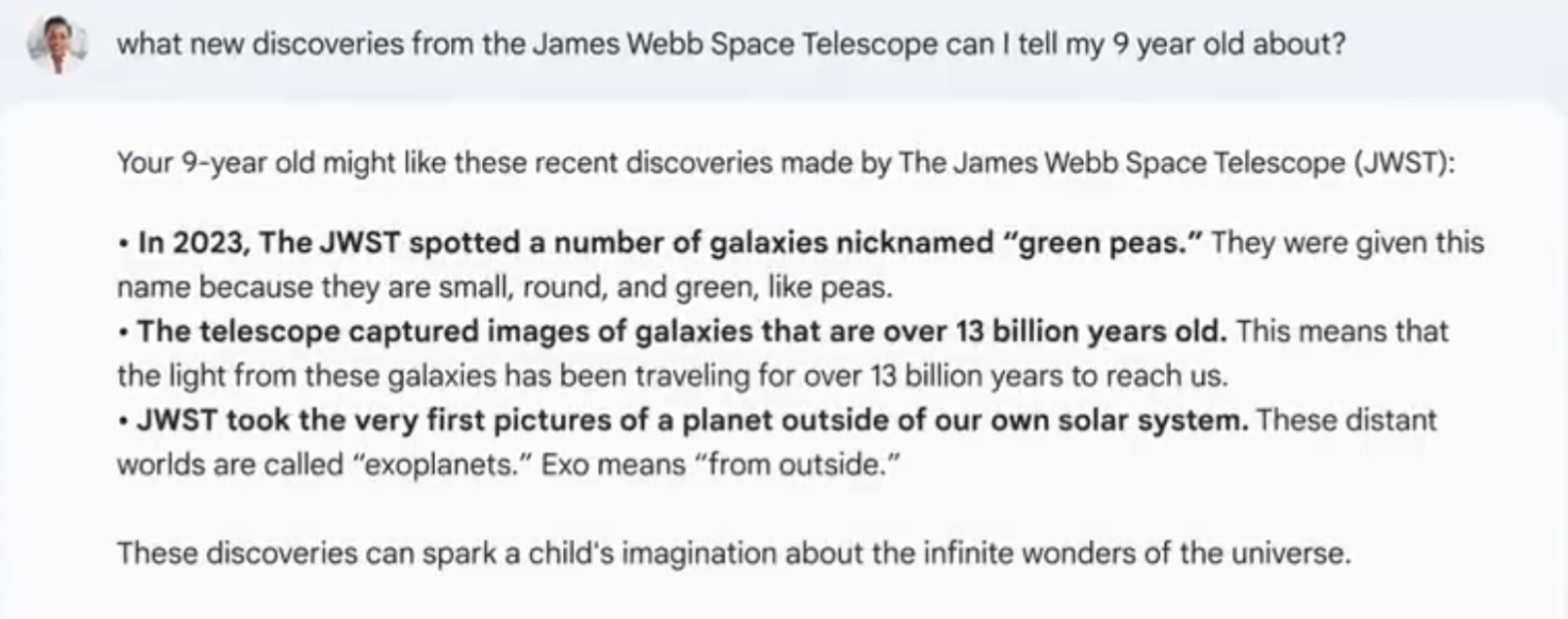

Google demonstrated Bard in both a tweet and a live-streamed event, showing it providing not only fact-based answers but also recommendations. The company was embarrassed when one of Bard’s answers turned out to be inaccurate, but the problem with Bard and the New Bing goes far beyond inaccuracy.

Giving advice without an advisor

At the live event, Google SVP Prabhakar Raghavan said Bard is well suited for answering queries classified as NORA (No One Right Answer). “For questions like this, perhaps we want to explore diverse opinions and perspectives and connect to the breadth of wisdom on the web,” he said. “It can organize a lot of information and multiple perspectives.”

“The breadth of wisdom on the web” really means that the bot is pulling data from millions of articles written by uncredited and unpaid humans. But Bard’s output doesn’t tell us that.

Raghavan showed Google answering the query “What are the best constellations to look for when stargazing?” Instead of showing the best articles from the web on this topic, the search engine shows its own mini-article with its own set of suggested constellations. There are no citations to indicate where these “multiple points of view” came from, and no authority to back up the claims.

Bard said: “Here are some popular ones.” How do we know these are popular, and that they are the best constellations? On whose authority is the bot saying this? You are simply supposed to trust it.

But trust is hard to come by. The company’s tweet led to an embarrassing situation: Bard provided a list of “new discoveries” from the James Webb Space Telescope (JWST), but one of its three bullet points was not actually a new discovery at all. According to NASA, the first photo of an exoplanet was taken in 2004, long before the JWST was launched in 2021.

Many critics are rightfully concerned that chatbot results may be untruthful, but one can speculate that as the technology improves, so will its ability to weed out mistakes. The bigger problem is that the bot is providing advice that seems to come out of nowhere, even though it is clear that Bard obtained and edited content from human writers it did not credit.

Devaluing expertise, authority and trust

Ironically, Google’s initiative runs counter to important criteria that Google says it prioritizes in organic search rankings. The company advises web publishers that it considers EEAT (Experience, Expertise, Authority, Trust) when determining which articles appear at the top of the page and which appear at the bottom. Criteria such as the author’s biography, whether the author describes relevant experience, and whether the publication has a trustworthy reputation are considered. By definition, a bot cannot meet any of these criteria.

Human input matters most when there is no single correct answer. We seek advice from a trusted source: someone with expertise in the topic and a set of opinions formed by experience.

Once you know the author, you can judge the credibility of the information. That is why, when I go to family gatherings, relatives approach me and ask questions like, “Which laptop should I buy?” They could (and maybe should) do a web search for the question, but they know me and trust that I won’t lead them in the wrong direction.

In a world where everyone has an opinion and speaks up on social media, Google appears to be betting that expertise matters less to users. The company expects users to read whatever they see at the top of their screens, treat it as being as credible as anything else, and stay on the site, where they continue to see its monetized ads uninterrupted. If you leave the search engine, you visit another website, where Google may or may not be the one serving the ads.

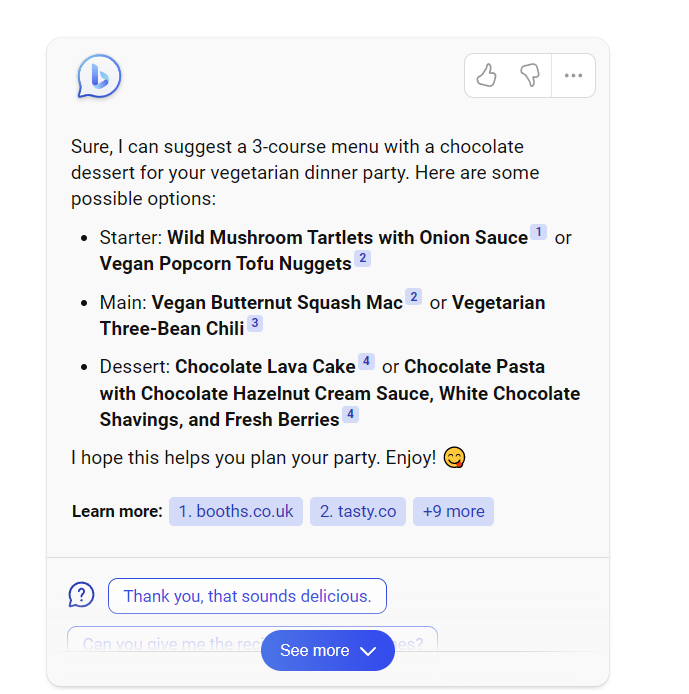

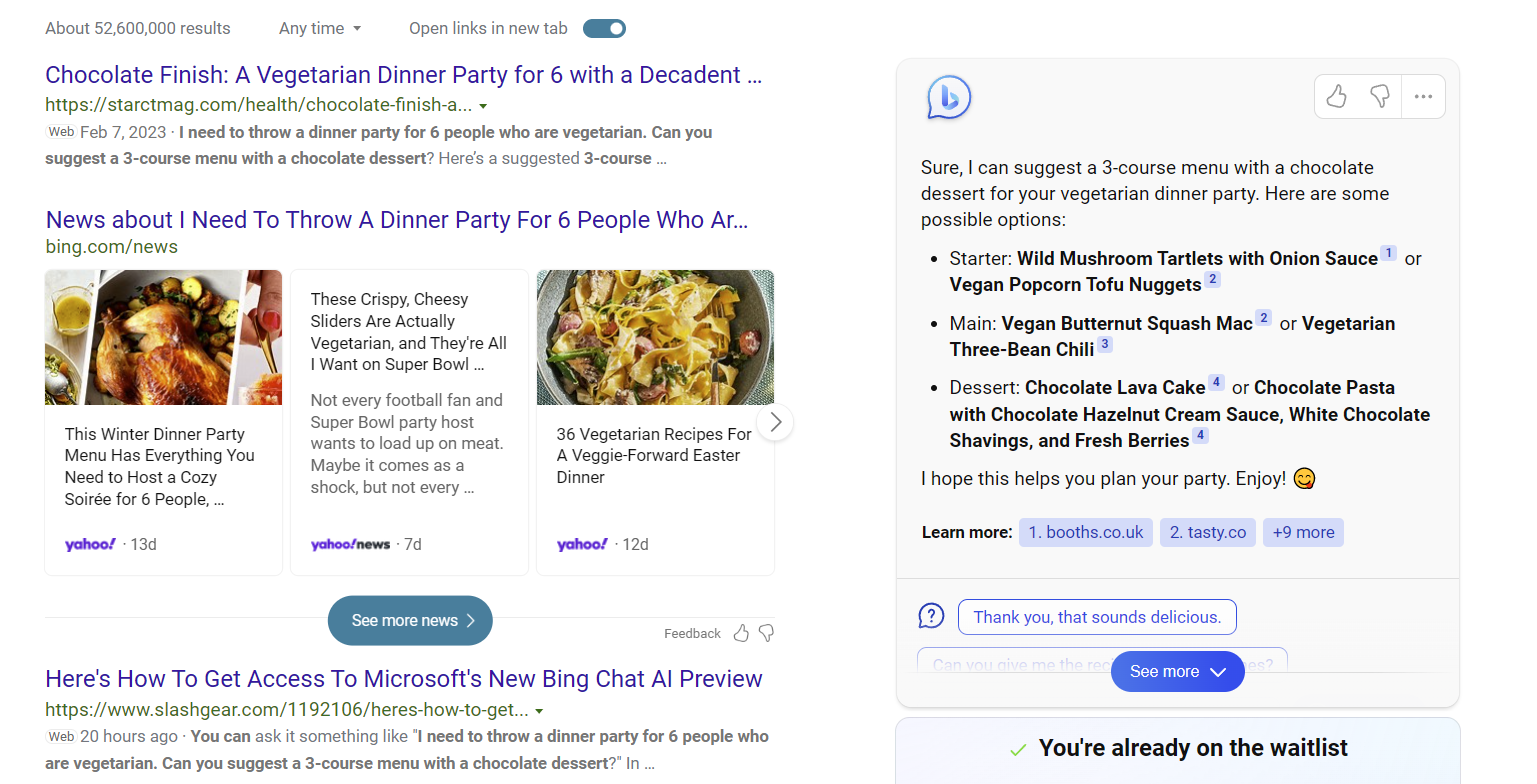

Bing shows sources but buries them

Bing’s new chatbot implementation is slightly better than Google’s in that it actually shows the sources of its information, but they are buried in small footnotes, some of which are not displayed unless you click a button to expand the answer.

Even if the citations in Bing’s chatbot were more prominent, it would still be offering advice you’d be better off getting from an expert. In the example above, the bot is suggesting a 3-course vegetarian dinner with a chocolate dessert. But how do you know that these are the best recipes, or that these dishes go well together?

I would much rather the top search result be written by a human who has actually cooked before and has thought about this set of dishes the way a person would. However, to Bing’s credit, it puts its own content next to the organic search results instead of pushing them down the page.

One might argue that the demo topics chosen by Google and Microsoft are highly subjective. Some people consider Orion to be the most visible constellation, while others choose Ursa Major. And that’s exactly why authors matter.

We are all guided by our biases, and the best authors embrace and disclose their biases rather than trying to hide them. If you’ve read my laptop reviews, you know that touch typing is important to me. I’ve tried hundreds of laptop keyboards. I can tell which ones are mushy and which ones are nice and snappy to the touch. And if you ask me which model is best, keyboard comfort factors into my recommendations. If you don’t care about keyboard quality, or you like spongy keys, you may decide to give less weight to my ratings (or aspects of them).

We don’t know whose bias is behind bot recipes, constellations, or other lists.

AI bots could harm the open web

I will admit another bias. I’m a professional writer, and chatbots like the ones Google and Bing are showing are an existential threat to anyone who gets paid for their words. Most websites rely heavily on search as a source of traffic, and without those eyeballs, many publishers’ business models would be broken. No traffic means no ads, no ecommerce clicks, no income, no jobs.

Ultimately, some publishers may be forced out of business. Others may retreat behind paywalls, and still others may block Google and Bing from indexing their content. That would deplete the bots’ sources of information and make their advice less reliable. And readers would have to pay more for quality content or settle for fewer voices.

At the same time, it is clear that these bots are trained by indexing the content of human writers, most of whom were paid for their work by publishers. So if the bot says Orion is the best constellation to see, it is because at some point it visited a site like our sister site, Space.com, and gathered information about constellations. Because how the model was trained is a black box, we don’t know exactly which websites led to which factual claims, but Bing’s citations give us some idea.

Whether what Google and Bing do constitutes plagiarism or copyright infringement is open to interpretation and may be decided in court. Getty Images is now suing Stability AI, the company behind the AI image generator Stable Diffusion, for using 10 million of its images to train a model. It’s not hard to imagine big publishers following suit.

A few years ago, Amazon was credibly accused of copying products from its own third-party sellers and then making its own Amazon-branded items, which naturally show up higher in its internal search. This sounds familiar.

It could be argued that Google’s LaMDA (which powers Bard) and Bing’s OpenAI engine are just doing the same thing that human creators do: they read primary sources and summarize them in their own words. But no human creator has the processing power or knowledge base of these AIs.

It can also be argued that publishers need a better traffic source than Google, but most of us have been trained to treat search as our first destination on the Internet. In 1997, people went to Yahoo, browsed a directory of websites, found websites that matched their interests, bookmarked them, and came back. This was much like the audience model of television networks, magazines, and newspapers, because most people had a few preferred sources of information that they relied on regularly.

Today, however, most Internet users are trained to go to distribution platforms such as Google, Facebook, and Twitter and navigate the web from there. You may like and trust a publisher, but you will often reach that publisher’s website by finding it on Google or Bing, which you can access directly from your browser’s address bar. It is difficult for any single publisher, or even a group of publishers, to change this deeply ingrained behavior.

More importantly, if you are a consumer of information, you are treated like a bot and expected to absorb information without caring where it came from or whether it is trustworthy. It’s not a good user experience.

Note: As with all editorials, the opinions expressed here are solely those of the writer, not of Tom’s Hardware as a team.