Next-Generation Memory Modules Show Up at Computex

Dynamic random access memory is an integral part of every computer, and DRAM requirements such as performance, power, density, and physical implementation tend to change from time to time. New types of memory modules for laptops and servers will emerge in the next few years as traditional SO-DIMMs and RDIMMs/LRDIMMs appear to be reaching their limits in terms of performance, efficiency and density.

At Computex 2023 in Taipei, Taiwan, ADATA demonstrated candidates that could replace SO-DIMMs and RDIMMs/LRDIMMs in client and server machines, respectively, over the next few years, according to Tom's Hardware. These include Compression Attached Memory Modules (CAMMs) for ultrathin notebooks, compact desktops, and other small form factor applications; Multi-Rank Buffered DIMMs (MR DIMMs) for servers; and CXL memory expansion modules for machines that need additional system memory at a lower cost than off-the-shelf DRAM.

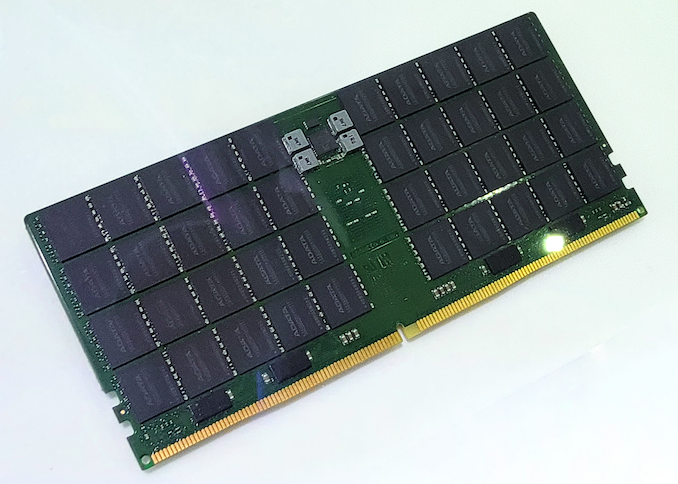

CAMM

The CAMM specification is due to be finalized by JEDEC in late 2023. Nevertheless, ADATA demonstrated a sample of such a module at the exhibition, highlighting its readiness to adopt the upcoming technology.

The main advantages of CAMM include shorter connections between memory chips and memory controllers (which simplifies the topology and thus allows for higher transfer speeds and lower costs), support for LPDDR-based modules (LPDDR has traditionally used point-to-point connections), dual-channel connectivity on a single module, and higher DRAM density and reduced thickness compared to double-sided SO-DIMMs.

Moving to an entirely new type of memory module would require a significant industry effort, but the benefits promised by CAMM probably justify the change.

Last year, Dell became the first PC manufacturer to adopt CAMM, in its Precision 7670 notebook. ADATA's CAMM module, however, looks very different from Dell's version, which is not unexpected since Dell uses a module that predates JEDEC standardization.

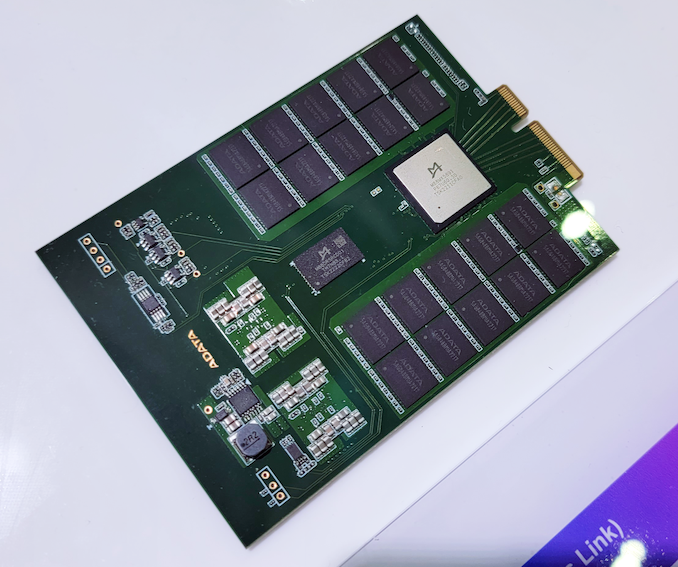

MR DIMM

Data center grade CPUs are rapidly increasing their core counts, so each generation must support more memory. However, cost, performance, and power consumption challenges make it difficult to increase DRAM device density quickly. Processors therefore add memory channels along with cores, which results in a large number of memory slots per CPU socket and greater motherboard complexity.

For this reason, the industry is developing two types of memory modules to replace the RDIMM/LRDIMM currently in use.

On the one hand, there is the Multiplexer Combined Ranks DIMM (MCR DIMM), a technology backed by Intel and SK Hynix. It is a dual-rank buffered memory module with a multiplexer buffer that fetches 128 bytes of data from both ranks working simultaneously and communicates with the memory controller at high speed (8,000 MT/s for now). Such modules promise to improve performance and greatly simplify building dual-rank modules.
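The combining step can be pictured with a toy model. The sketch below is only an illustration of the idea and not the actual buffer protocol: `read_rank` and `mux_buffer_read` are hypothetical helpers, and the 64-byte burst per rank is an assumption obtained by splitting the article's 128 bytes evenly across the two ranks.

```python
# Toy model of a multiplexer buffer combining two ranks (illustrative only).
RANK_BURST_BYTES = 64  # assumption: 128 bytes split evenly over two ranks


def read_rank(rank_id: int, address: int) -> bytes:
    """Hypothetical stand-in for one rank returning a single burst.

    The payload is filled with the rank id so the two halves are easy to
    tell apart; the address is accepted but not used in this toy model.
    """
    return bytes([rank_id]) * RANK_BURST_BYTES


def mux_buffer_read(address: int) -> bytes:
    """Combine the bursts of rank 0 and rank 1 into one host-side transfer."""
    return read_rank(0, address) + read_rank(1, address)


payload = mux_buffer_read(0x1000)
print(len(payload))  # 128
```

The point of the model is only that the host sees one 128-byte transfer, while each rank contributes half of it.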

On the other hand, there is the Multi-Rank Buffered DIMM (MR DIMM), a technology that appears to be supported by AMD, Google, Microsoft, JEDEC, and Intel (at least based on information from ADATA). MR DIMMs use the same concept as MCR DIMMs: a buffer that allows the memory controller to access both ranks simultaneously and to interact with the module at a high data transfer rate. The specification promises to start at 8,800 MT/s with Gen1, evolve to 12,800 MT/s with Gen2, and reach 17,600 MT/s with Gen3.
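To put those transfer rates in perspective, they can be converted into theoretical peak bandwidth per module. The following is a rough sketch that assumes a 64-bit data path per module (8 bytes per transfer, ECC bits excluded); that width and the helper name `peak_bandwidth_gbs` are assumptions for illustration and do not come from ADATA's materials.

```python
# Rough peak-bandwidth estimate for the MR DIMM generations named above.
BYTES_PER_TRANSFER = 8  # assumption: 64 data bits per module, ECC excluded


def peak_bandwidth_gbs(mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1000 MB)."""
    return mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


# Transfer rates as quoted in the article.
GENERATIONS = {"Gen1": 8800, "Gen2": 12800, "Gen3": 17600}

peaks = {gen: peak_bandwidth_gbs(rate) for gen, rate in GENERATIONS.items()}
for gen, gbs in peaks.items():
    print(f"{gen}: {GENERATIONS[gen]} MT/s -> {gbs:.1f} GB/s")
```

Under that assumption the three generations work out to roughly 70.4, 102.4, and 140.8 GB/s per module. Sustained bandwidth in a real system would be lower.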

ADATA already has MR DIMM samples that support an 8,400 MT/s data transfer rate and carry 16GB, 32GB, 64GB, 128GB, or 192GB of DDR5 memory. According to ADATA, these modules will be supported by Intel's Granite Rapids CPUs.

CXL memory

While both MR DIMMs and MCR DIMMs promise increased module capacity, some servers require large amounts of system memory at relatively low cost. Today, such machines have to rely on Intel's Optane DC Persistent Memory modules, which are based on the now-discontinued 3D XPoint memory and populate standard DIMM slots. In the future, they will use memory on modules that comply with the Compute Express Link (CXL) specification and connect to the host CPU over a PCIe interface.

ADATA showcased a CXL 1.1-compliant memory expansion device with an E3.S form factor and a PCIe 5.0 x4 interface at Computex. The unit is designed to cost-effectively expand a server's system memory using 3D NAND, with significantly lower latency than even state-of-the-art SSDs.
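For a sense of scale, the PCIe 5.0 x4 link sets an upper bound on how fast such a device can exchange data with the host. The sketch below uses the standard PCIe 5.0 figures of 32 GT/s per lane and 128b/130b line encoding. It ignores packet and CXL protocol overhead, so it is an optimistic ceiling, and the function name is hypothetical.

```python
# Approximate ceiling for a PCIe 5.0 x4 link, one direction.
def pcie_link_bandwidth_gbs(
    lanes: int,
    gt_per_s: float = 32.0,        # PCIe 5.0 raw rate per lane
    encoding: float = 128 / 130,   # 128b/130b line encoding efficiency
) -> float:
    """Link bandwidth in GB/s per direction, before protocol overhead."""
    return lanes * gt_per_s * encoding / 8  # 8 bits per byte


x4 = pcie_link_bandwidth_gbs(4)
print(f"PCIe 5.0 x4: ~{x4:.2f} GB/s per direction")
```

That comes to about 15.75 GB/s per direction, which fits the device's role as a lower-cost capacity tier.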

Image credit: Tom's Hardware