OpenAI’s Shap-E Model Makes 3D Objects From Text or Images

Recently, we’ve seen AI models that turn detailed text prompts into videos and chatbots that run on phones. Now OpenAI, the company behind ChatGPT, has introduced Shap-E, a model that generates 3D objects you can open in Microsoft Paint 3D or even convert into an STL file to print on one of the best 3D printers.

The Shap-E model is available for free on GitHub and runs locally on your PC. Once all the files and models have been downloaded, it does not need to ping the internet. There are also no usage charges, because it does not require an OpenAI API key.

Actually getting Shap-E to run is a big challenge. OpenAI provides very few instructions and just tells you to install it with the Python pip command. However, the company doesn’t mention the dependencies you need to make it work, and the latest versions of many of them simply don’t work.

Once I finally had Shap-E installed, I found that the default way to access it is through Jupyter Notebook, which lets you view and run the sample code in small chunks to see what it does. There are three sample notebooks: “text-to-3d” (which uses a text prompt), “image-to-3d” (which converts a 2D image into a 3D object), and “encode_model”, which takes an existing 3D model and uses Blender (which must be installed) to transform it into something else and re-render it. I tested the first two, because the third (using Blender with existing 3D objects) was beyond my skill set.

How Shap-E Text-to-3D Looks

Like so many AI models I’m testing these days, Shap-E is full of potential, but its current output is mediocre at best. I tried the text-to-3D conversion with a few different prompts. Most of the time I got the object I asked for, but the resolution was low and important details were missing.

Using the sample_text_to_3d notebook, I got two types of output: a color animated GIF that displays in the browser, and a monochrome PLY file that you can open later in a program like Paint 3D. The animated GIFs always looked much better than the PLY files.

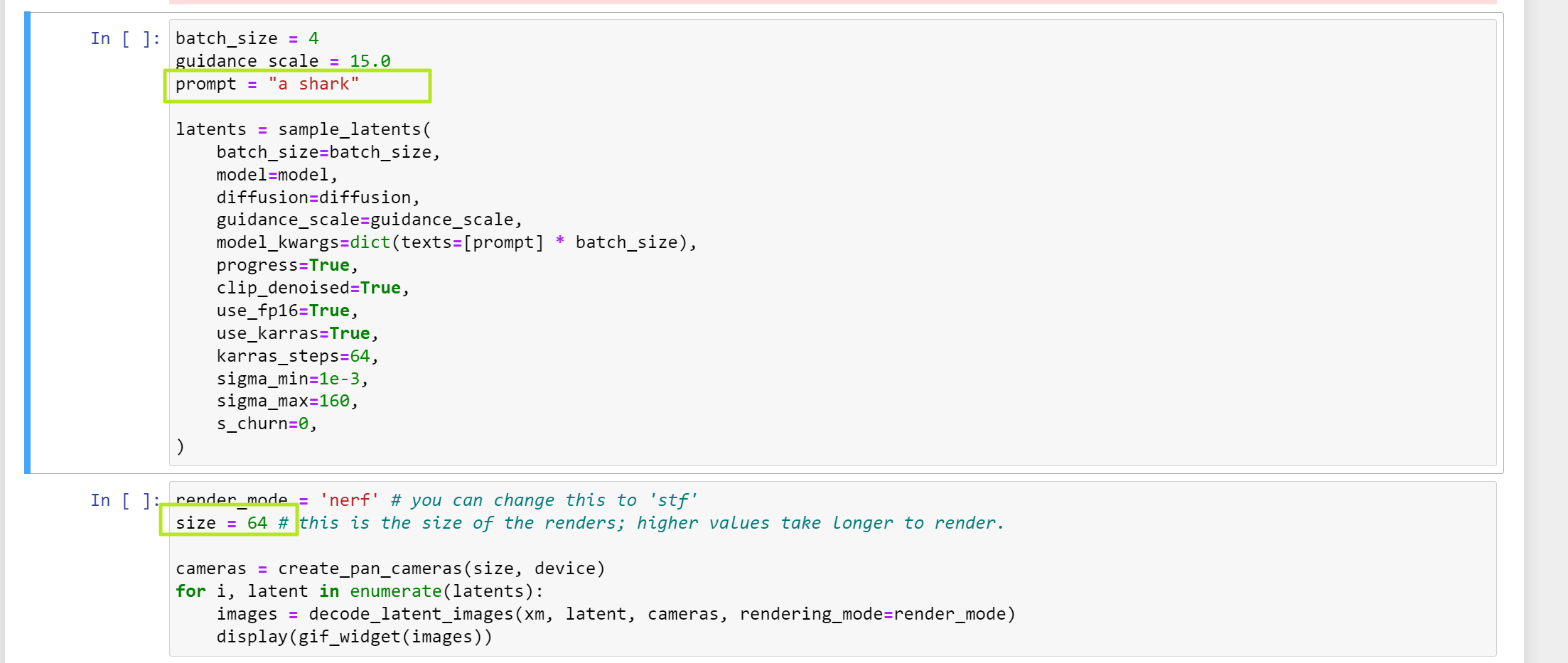

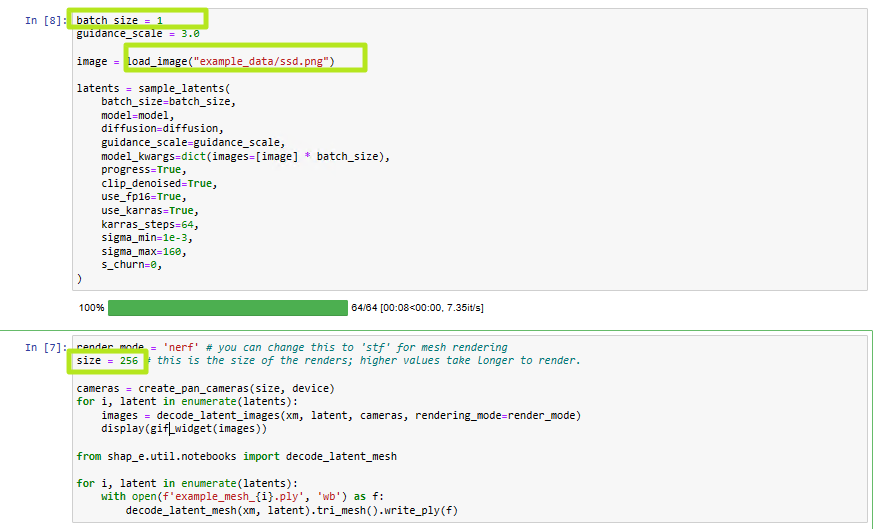

The default “shark” prompt looked fine as an animated GIF, but when I opened the PLY in Paint 3D, it seemed lacking. By default the notebook gives you four 64×64 animated GIFs, but I changed the code to increase the resolution to 256×256 and output a single GIF (since all four GIFs look the same).
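To get a rough sense of what that resolution bump costs, it helps to count pixels. The sketch below is only arithmetic on the frame sizes mentioned above. The helper name `pixel_ratio` is made up for illustration, the frames are assumed to be square, and actual render time may not scale exactly with pixel count.

```python
def pixel_ratio(old_size: int, new_size: int) -> float:
    """Return how many times more pixels a square new_size frame has than an old_size one."""
    return (new_size * new_size) / (old_size * old_size)


# Going from the notebook's default 64x64 renders to 256x256:
print(pixel_ratio(64, 256))  # 16.0
```

Each 256×256 frame has 16 times as many pixels as a 64×64 one, which is one reason it makes sense to drop from four GIFs to one when raising the size.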

When I asked for “a plane that looks like a banana,” one of OpenAI’s example prompts, I got a pretty good GIF, especially when I bumped the resolution up to 256. The PLY file, however, revealed holes in the wings.

When I asked for a Minecraft Creeper, I got a GIF that was correctly colored green and black and a PLY with the basic shape of a Creeper. However, real Minecraft fans would not be satisfied with it, and the geometry was too messy to 3D print (when I converted it to an STL).
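One common way to quantify “messy” geometry before sending a mesh to a printer is to check whether it is closed, meaning every edge is shared by exactly two triangles. The sketch below is not part of Shap-E. It assumes the mesh’s faces are already loaded as triples of vertex indices, and `is_watertight` is a hypothetical helper name. It is a necessary check for a printable solid, not a complete one.

```python
from collections import Counter


def is_watertight(faces):
    """Return True if every edge is shared by exactly two triangles.

    `faces` is a list of (a, b, c) vertex-index triples.
    """
    edge_counts = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            # Store each edge with sorted endpoints so direction does not matter.
            edge_counts[(min(u, v), max(u, v))] += 1
    return bool(edge_counts) and all(n == 2 for n in edge_counts.values())


# A tetrahedron is closed; drop one face and it has a hole.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
print(is_watertight(tetra))      # True
print(is_watertight(tetra[:3]))  # False
```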

Shap-E Image to 3D Object

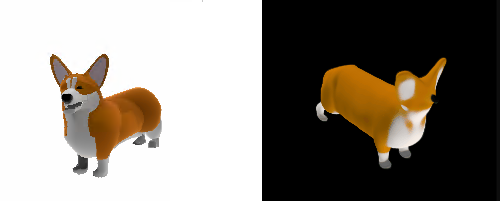

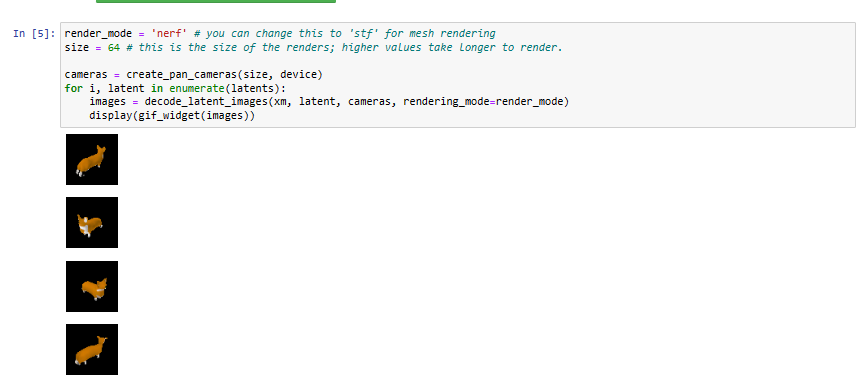

I also tried the image-to-3d script, which takes an existing 2D image file and converts it into a 3D PLY object. The sample illustration of a corgi became a decent low-res object, output as a rotating animated GIF, though without much detail. Below, the original image is on the left and the GIF is on the right. You can see that the eyes appear to be missing.

By changing the code, I was also able to output a PLY 3D file that can be opened in Paint 3D. This is what it looked like.

I also tried feeding the image-to-3d script some of my own images, including a photo of an SSD, which came out looking broken, and a transparent PNG of the Tom’s Hardware logo, which didn’t look good either.

However, a 2D PNG that looks a bit more three-dimensional (the way the corgi does) might give better results.

Shap-E Performance

Whether generating 3D objects from text or from images, Shap-E required a lot of system resources. On my home desktop, with an RTX 3080 GPU and a Ryzen 9 5900X CPU, a render took about 5 minutes to finish. On my Asus ROG Strix Scar 18, with an RTX 4090 laptop GPU and an Intel Core i9-13980HX, it took 2 to 3 minutes.

However, when I tried a text-to-3D conversion on an old laptop with an Intel 8th Gen U-series CPU and integrated graphics, only 3% of the render was done after an hour. In other words, if you are going to use Shap-E, make sure you have an Nvidia GPU (Shap-E does not support GPUs from other brands; the options are CUDA and CPU). Otherwise it will simply take too long.
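To put that 3% figure in perspective, here is a simple linear extrapolation. It assumes progress continues at a constant rate, which may not hold in practice, and `estimate_total_hours` is a hypothetical helper written for this illustration.

```python
def estimate_total_hours(elapsed_minutes: float, fraction_done: float) -> float:
    """Linearly extrapolate the total run time from the progress so far."""
    if not 0 < fraction_done <= 1:
        raise ValueError("fraction_done must be in (0, 1]")
    return elapsed_minutes / fraction_done / 60


# One hour in, 3% done:
print(round(estimate_total_hours(60, 0.03), 1))  # 33.3
```

At that pace a single render would take well over a day, compared with a few minutes on the Nvidia GPUs above.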

Note that the model needs to be downloaded the first time the script is run. This is 2-3 GB and may take several minutes to transfer.

How to install and run Shap-E on your PC

OpenAI has posted a Shap-E repository on GitHub, along with some instructions on how to run it. I created a dedicated Python environment using Miniconda and tried to install and run the software on Windows. But I kept running into problems, especially because I was unable to install PyTorch3D, a required library.

But when I decided to use WSL2 (Windows Subsystem for Linux), I got it up and running with very little effort. So the steps below will work on native Linux or in WSL2 under Windows. I tested them in WSL2.

1. Install Miniconda or Anaconda for Linux if you don’t already have it. You can find downloads and instructions on the Conda site.

2. Create a Conda environment called shap-e with Python 3.9 installed (other versions of Python may also work).

conda create -n shap-e python=3.9

3. Activate the shap-e environment.

conda activate shap-e

4. Install PyTorch. Use this command if you have an Nvidia graphics card.

conda install pytorch=1.13.0 torchvision pytorch-cuda=11.6 -c pytorch -c nvidia

If you don’t have an Nvidia card, you’ll need to do a CPU-based installation. The install is quick, but in my experience the actual 3D generation on a CPU was extremely slow.

conda install pytorch torchvision torchaudio cpuonly -c pytorch

5. Build PyTorch3D. This is the area where it took me hours to find a combination that works.

pip install "git+https://github.com/facebookresearch/pytorch3d.git"

If you get a CUDA error, try running sudo apt install nvidia-cuda-dev and then repeat the process.

6. Install Jupyter Notebook using conda.

conda install -c anaconda jupyter

7. Clone the shap-e repo.

git clone https://github.com/openai/shap-e

Git will create a shap-e folder underneath the folder you cloned it from.

8. Enter the shap-e folder and run the install using pip.

cd shap-e

pip install -e .

9. Launch a Jupyter notebook.

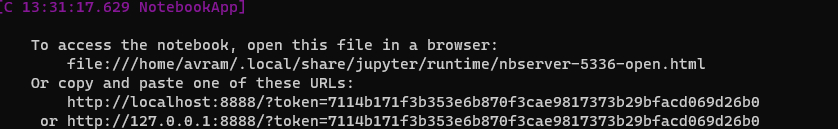

jupyter notebook

10. Navigate to the localhost URL the software shows you. It will be http://localhost:8888?token= followed by your token. You will see a directory of folders and files.

11. Browse to shap_e/examples and double-click sample_text_to_3d.ipynb.

A notebook opens showing different sections of code.

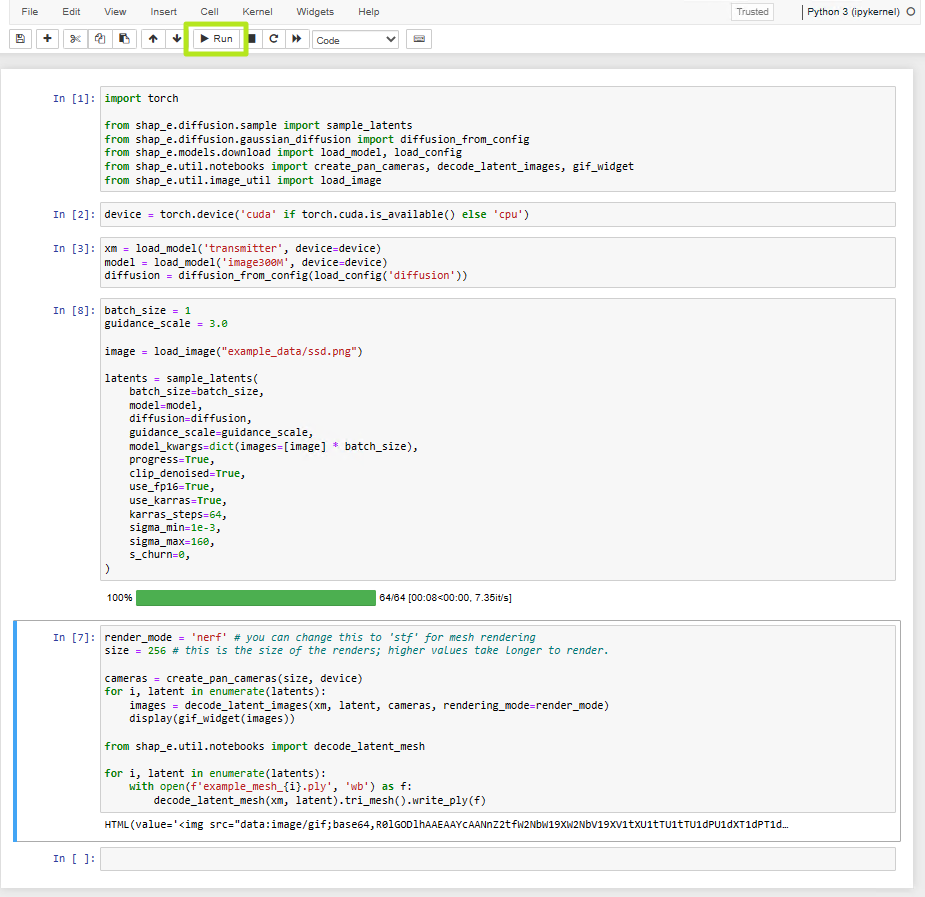

12. Highlight each section and click the Run button, waiting for it to complete before moving on to the next section.

![Highlight each section and click Run](https://cdn.mos.cms.futurecdn.net/PZUetWbUYtqapMRWEqVcdQ.png)

This process will download several large models to your local drive, so it will take a while the first time you run it. When it finishes, you will see four 3D models of a shark in your browser. There will also be four .ply files in the examples folder, which you can open in a 3D program such as Paint 3D. You can also convert them to STL files using an online converter.
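For readers curious about what a PLY-to-STL conversion involves, here is a minimal sketch of the output half: writing triangles in the ASCII STL layout. It is not how any particular online converter works. It assumes the mesh has already been loaded into plain Python lists of vertices and faces (reading the PLY file is left out), and `write_ascii_stl` is a hypothetical helper name.

```python
import io


def _normal(v0, v1, v2):
    """Unit normal of a triangle via the cross product (zero vector if degenerate)."""
    ux, uy, uz = (v1[i] - v0[i] for i in range(3))
    vx, vy, vz = (v2[i] - v0[i] for i in range(3))
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = (nx * nx + ny * ny + nz * nz) ** 0.5
    if length == 0:
        return (0.0, 0.0, 0.0)
    return (nx / length, ny / length, nz / length)


def write_ascii_stl(vertices, faces, out, name="mesh"):
    """Write triangles to `out` (a text stream) in ASCII STL format."""
    out.write(f"solid {name}\n")
    for a, b, c in faces:
        v0, v1, v2 = vertices[a], vertices[b], vertices[c]
        nx, ny, nz = _normal(v0, v1, v2)
        out.write(f"  facet normal {nx:e} {ny:e} {nz:e}\n")
        out.write("    outer loop\n")
        for x, y, z in (v0, v1, v2):
            out.write(f"      vertex {x:e} {y:e} {z:e}\n")
        out.write("    endloop\n")
        out.write("  endfacet\n")
    out.write(f"endsolid {name}\n")


# Usage: a single triangle lying in the XY plane.
buf = io.StringIO()
write_ascii_stl([(0, 0, 0), (1, 0, 0), (0, 1, 0)], [(0, 1, 2)], buf)
print(buf.getvalue())
```

To write a real file, pass an open text file instead of the `StringIO` buffer.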

If you want to change the prompt and try again, refresh your browser and change “shark” to something else in the prompt section. Changing the size from 64 to a larger number will also give you a higher-resolution image.

13. Navigate back to the examples folder and double-click sample_image_to_3d.ipynb so you can try the image-to-3d script.

14. Highlight each section and click Run.

By default, four small images of corgis are displayed.

However, I recommend adding the following code to the last notebook section so that it outputs PLY files as well as the animated GIFs.

    from shap_e.util.notebooks import decode_latent_mesh

    # Write one PLY file per generated latent.
    for i, latent in enumerate(latents):
        with open(f'example_mesh_{i}.ply', 'wb') as f:
            decode_latent_mesh(xm, latent).tri_mesh().write_ply(f)

15. Change the image location in section 3 to use a different image. I also recommend changing batch_size to 1 so that it only makes one image. Changing the size to 128 or 256 will give you a higher-resolution image.

16. Create the following Python script and save it as text-to-3d.py or another name. It generates a PLY file based on a text prompt that you enter at the command line.

    import torch

    from shap_e.diffusion.sample import sample_latents
    from shap_e.diffusion.gaussian_diffusion import diffusion_from_config
    from shap_e.models.download import load_model, load_config
    from shap_e.util.notebooks import create_pan_cameras, decode_latent_images, gif_widget
    from shap_e.util.notebooks import decode_latent_mesh

    # Use the GPU through CUDA if one is available; otherwise fall back to the CPU.
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    xm = load_model('transmitter', device=device)
    model = load_model('text300M', device=device)
    diffusion = diffusion_from_config(load_config('diffusion'))

    batch_size = 1
    guidance_scale = 15.0

    prompt = input("Enter prompt: ")
    # Base the output file name on the prompt, with spaces replaced by underscores.
    filename = prompt.replace(" ", "_")

    latents = sample_latents(
        batch_size=batch_size,
        model=model,
        diffusion=diffusion,
        guidance_scale=guidance_scale,
        model_kwargs=dict(texts=[prompt] * batch_size),
        progress=True,
        clip_denoised=True,
        use_fp16=True,
        use_karras=True,
        karras_steps=64,
        sigma_min=1e-3,
        sigma_max=160,
        s_churn=0,
    )

    render_mode = "nerf"  # you can change this to 'stf'
    size = 64  # this is the size of the renders; higher values take longer to render.

    # Write one PLY mesh per generated latent.
    for i, latent in enumerate(latents):
        with open(f'{filename}_{i}.ply', 'wb') as f:
            decode_latent_mesh(xm, latent).tri_mesh().write_ply(f)

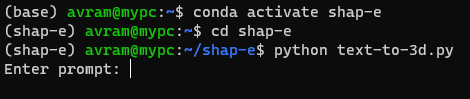

17. Run python text-to-3d.py and enter the prompt when the program asks for it.

This will give you PLY output, but not a GIF. If you have some knowledge of Python, you can modify the script to do more.
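As one example of such a modification, the prompt could be taken as a command-line argument instead of through input(), and the file name could be cleaned up more thoroughly than by replacing spaces. The sketch below covers only that argument handling, using Python’s standard argparse and re modules. The names `slugify`, `parse_args` and the `--batch-size` option are illustrative choices, not part of Shap-E, and the generation code from the script above would still need to be added where it uses the prompt.

```python
import argparse
import re


def slugify(prompt: str) -> str:
    """Turn a prompt into a filesystem-friendly base name."""
    slug = re.sub(r"[^A-Za-z0-9]+", "_", prompt).strip("_")
    return slug or "output"


def parse_args(argv=None):
    """Parse the prompt and an optional batch size from the command line."""
    parser = argparse.ArgumentParser(description="Generate PLY files from a text prompt.")
    parser.add_argument("prompt", help="text description of the object")
    parser.add_argument("--batch-size", type=int, default=1,
                        help="number of meshes to generate")
    return parser.parse_args(argv)


# Demonstration with an explicit argument list; a real script would call
# parse_args() with no arguments so that it reads sys.argv.
args = parse_args(["a plane that looks like a banana", "--batch-size", "2"])
print(slugify(args.prompt), args.batch_size)
```

Replacing every run of non-alphanumeric characters, rather than only spaces, keeps characters such as slashes or quotes in a prompt from ending up in the output file name.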