Ampere Unveils 192-Core CPU, Then Offers Controversial Test Results

Ampere this week introduced its AmpereOne processor for cloud data centers, the industry’s first general-purpose CPU with up to 192 cores, which can also be used for AI inference.

The new chip draws more power than its predecessor, the Ampere Altra (which will remain in Ampere’s stable for at least some time), but the company claims that, despite the higher power consumption, its processors with up to 192 cores offer higher computational density than CPUs from AMD and Intel. Some of these performance claims are controversial.

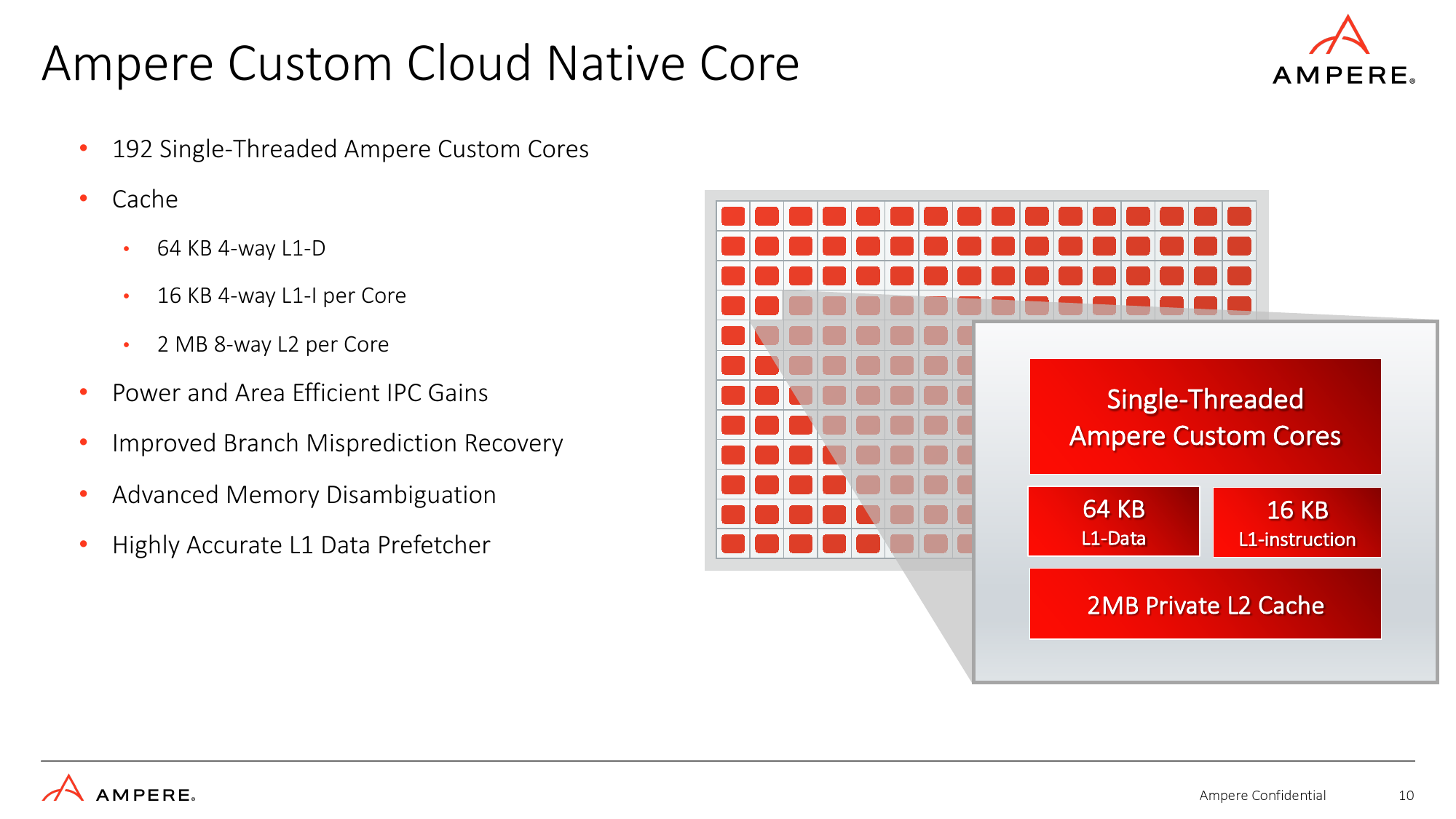

192 custom cloud native cores

Ampere’s AmpereOne processor features 136 to 192 cores (versus 32 to 128 cores in Ampere Altra) running at up to 3.0 GHz. The cores are based on the company’s proprietary implementation of the Armv8.6+ instruction set architecture (with two 128-bit vector units supporting FP16, BF16, INT16, and INT8 formats), are equipped with 2MB of 8-way set-associative L2 cache per core (up from 1MB), and are interconnected using a mesh network with 64 home nodes and a directory-based snoop filter. In addition to the L1 and L2 caches, the SoC also has 64MB of system-level cache. The new CPUs are rated between 200W and 350W depending on the exact SKU, while the Ampere Altra is rated between 40W and 180W.

The company claims that the new cores are further optimized for cloud and AI workloads, featuring “power and efficiency” improvements in instructions per clock (IPC). This probably means higher IPC (compared to Arm’s Neoverse N1 used in Altra) without a tangible increase in power consumption or die area. As for the die area, Ampere has not disclosed it, but has stated that the AmpereOne is built on one of TSMC’s 5nm-class process technologies.

Ampere hasn’t revealed all the details of the AmpereOne core, but says it features a highly accurate L1 data prefetcher (which reduces latency, ensures the CPU spends less time waiting for data, and lowers system power consumption by minimizing memory accesses), refined branch misprediction recovery (the faster the CPU can detect and recover from a branch misprediction, the less latency it incurs and the less power it wastes), and sophisticated memory disambiguation (which increases IPC, minimizes pipeline stalls, maximizes out-of-order execution, reduces latency, and improves handling of multiple read/write requests in virtualized environments).

The list of improvements to the AmpereOne core architecture doesn’t look that long on paper, but these improvements can improve performance significantly. They required a lot of research (in other words, what are the biggest performance killers for a cloud data center CPU?) and a lot of work to implement efficiently.

Advanced security and I/O

As AmpereOne SoCs are targeted at cloud data centers, they are equipped with appropriate I/O, including eight DDR5 channels for up to 16 modules supporting up to 8 TB of memory per socket, and 128 lanes of PCIe Gen5 with 32 controllers and x4 bifurcation.

Data centers also require certain reliability, availability, serviceability (RAS), and security features. To that end, the SoC fully supports ECC memory, single-key memory encryption, memory tagging, secure virtualization, and nested virtualization, to name a few. Additionally, AmpereOne has a number of security features, including cryptographic and entropy accelerators, speculative side-channel attack mitigation, and ROP/JOP attack mitigation.

Interesting benchmark results

Ampere’s AmpereOne SoC is undoubtedly an impressive piece of silicon: with 192 general-purpose cores, it is the industry’s first chip of its kind designed to handle cloud workloads. But to prove its point, Ampere uses some rather curious benchmark results.

Ampere believes AmpereOne’s computational density is a key advantage. The company claims that a single 42U 16.5kW rack filled with 1S machines based on the 192-core AmpereOne SoC can support up to 7926 virtual machines, whereas a rack based on AMD’s 96-core EPYC 9654 ‘Genoa’ can handle 2496 VMs and a rack powered by Intel’s 56-core Xeon Scalable 8480+ ‘Sapphire Rapids’ CPUs can handle 1680 VMs. This comparison makes a lot of sense within a power budget of 16.5kW.
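To put the rack-level claim in proportion, the figures above can be reduced to simple ratios. The short Python sketch below uses only the VM counts and the 16.5kW budget quoted by Ampere; the dictionary keys and function names are illustrative, and the inputs are vendor claims rather than independent measurements.

```python
# VM counts per 42U, 16.5 kW rack, as claimed by Ampere (not independently verified).
RACK_POWER_KW = 16.5
vms_per_rack = {
    "AmpereOne (192 cores)": 7926,
    "EPYC 9654 (96 cores)": 2496,
    "Xeon 8480+ (56 cores)": 1680,
}


def density_ratio(name_a, name_b):
    """Return how many times more VMs rack A hosts than rack B."""
    return vms_per_rack[name_a] / vms_per_rack[name_b]


def vms_per_kw(name):
    """Return claimed VMs per kilowatt of rack power budget."""
    return vms_per_rack[name] / RACK_POWER_KW


if __name__ == "__main__":
    for name in vms_per_rack:
        print(f"{name}: {vms_per_kw(name):.0f} VMs per kW")
    for rival in ("EPYC 9654 (96 cores)", "Xeon 8480+ (56 cores)"):
        ratio = density_ratio("AmpereOne (192 cores)", rival)
        print(f"AmpereOne vs {rival}: {ratio:.2f}x")
```

By Ampere’s own numbers, that works out to roughly 3.2x the VM count of the Genoa rack and 4.7x that of the Sapphire Rapids rack at the same power budget.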

However, the power density of 42U racks is on the rise, and hyperscalers like AWS, Google, and Microsoft are ready for this, especially for performance-demanding workloads. According to a 2020 survey from Uptime Institute, 16% of enterprises had deployed standard 42U racks with power densities ranging from 20kW to 50kW or more. As the TDPs of AMD’s latest and previous-generation CPUs have risen compared to their predecessors, the number of typical deployments using 20kW racks is now higher, not lower.

In terms of performance, Ampere demonstrates the advantages of a 160-core AmpereOne-based system with 512 GB of memory over a system based on AMD’s 96-core EPYC 9654 CPU with 256 GB of memory (meaning it ran in 8-channel mode instead of the 12-channel mode supported by Genoa) in generative AI (Stable Diffusion) and AI recommenders (DLRM). The Ampere-based machine produced 2.3x more frames/sec for generative AI and more than 2x more queries/sec for AI recommendations.

In this case, Ampere measured its own system processing the data at FP16 precision, while the AMD-based machine computed at FP32 precision, which is not an apples-to-apples comparison. Additionally, many FP16 workloads now run on GPUs rather than CPUs, and massively parallel GPUs tend to deliver great results in generative AI and AI recommendation workloads.
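One way to put the 2.3x figure in context is to account for the different core counts of the two test systems. The Python sketch below is a rough normalization that assumes throughput scales linearly with core count (an assumption of this example, not something Ampere states). It does not correct for the FP16 versus FP32 mismatch; the function names are illustrative.

```python
# Figures quoted in the article for the Stable Diffusion comparison.
AMPERE_CORES = 160
AMD_CORES = 96
CLAIMED_SPEEDUP = 2.3  # AmpereOne frames/sec relative to EPYC 9654

# The 256 GB AMD configuration implies 8 of Genoa's 12 memory channels were populated.
GENOA_CHANNELS_USED = 8
GENOA_CHANNELS_SUPPORTED = 12


def per_core_speedup(speedup, cores_a, cores_b):
    """Scale a system-level speedup of A over B to a per-core figure.

    Assumes linear scaling with core count, which real workloads rarely achieve.
    """
    return speedup * cores_b / cores_a


def channel_fraction(used, supported):
    """Fraction of the supported memory channels that were populated."""
    return used / supported


if __name__ == "__main__":
    ratio = per_core_speedup(CLAIMED_SPEEDUP, AMPERE_CORES, AMD_CORES)
    frac = channel_fraction(GENOA_CHANNELS_USED, GENOA_CHANNELS_SUPPORTED)
    print(f"Per-core speedup under linear scaling: {ratio:.2f}x")
    print(f"Genoa memory channels populated: {frac:.0%}")
```

Under that assumption the claimed lead shrinks to about 1.38x per core, before considering that the AMD system ran at higher precision with only two thirds of its memory channels populated.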

Summary

Ampere’s AmpereOne is the industry’s first general-purpose CPU with up to 192 cores, which certainly deserves a lot of respect. These CPUs also offer robust I/O capabilities and advanced security features, and can be expected to deliver instructions per clock (IPC) gains. They can also run AI workloads with FP16, BF16, INT16, and INT8 precision.

However, the company has opted for rather controversial methods to prove its point with benchmark results, which casts a shadow over its performance claims. That said, it will be particularly interesting to see independent test results for AmpereOne-based servers.